mirror of

https://github.com/zeromicro/go-zero.git

synced 2026-05-11 16:59:59 +08:00

Compare commits

52 Commits

copilot/fi

...

v1.9.4

| Author | SHA1 | Date | |
|---|---|---|---|
| | f29c8612e8 | | |
| | 35ba024103 | | |
| | 52df1c532a | | |
| | 39729f3756 | | |
| | 5c9ea81db2 | | |
| | b284664de4 | | |
| | 1b76885040 | | |
| | eef217522b | | |
| | 6bd0d169d5 | | |
| | 3d291328d8 | | |
| | 858f8ca82e | | |
| | 4ff3975c5a | | |
| | 7b23f73268 | | |
| | 918a7be698 | | |
| | 0a724447cd | | |
| | 9e425893a7 | | |
| | 4de13b6cc8 | | |
| | c6f75532fa | | |
| | fdf4ccf057 | | |
| | b333ed245b | | |
| | 8f1576df36 | | |
| | 72dd970969 | | |
| | 29b65e12c1 | | |
| | 577a611dc3 | | |
| | 75941aedd4 | | |
| | c7065171d7 | | |
| | 052de3b552 | | |
| | 866613af8c | | |
| | 3d4f6a5e16 | | |
| | d1d47d02d5 | | |
| | d6c876860b | | |
| | 98423ca948 | | |
| | 4e52d77ad8 | | |
| | 1fc2cfb859 | | |
| | 942cdae41d | | |
| | e9c3607bc6 | | |
| | d1603e9166 | | |
| | e30317e9c4 | | |
| | 568f9ce007 | | |
| | dcb309065a | | |
| | bf8e17a686 | | |
| | b2ebbfce62 | | |
| | 2b10a6a223 | | |
| | 80c320b46e | | |
| | bea9d150a1 | | |
| | 3f756a2cbf | | |
| | bbe5bbb0c0 | | |
| | 5ad2278a69 | | |
| | 77763fe748 | | |
| | 538c4fb5c7 | | |
| | 315fb2fe0a | | |
| | e382887eb8 | | |

98

.github/copilot-instructions.md

vendored


@@ -8,14 +8,16 @@ go-zero is a web and RPC framework with lots of built-in engineering practices d
 
 ### Key Architecture Components
 
-- **REST API framework** (`rest/`) - HTTP service framework with middleware support
-- **RPC framework** (`zrpc/`) - gRPC-based RPC framework with service discovery
-- **Core utilities** (`core/`) - Foundational components including:
-  - Circuit breakers, rate limiters, load shedding
-  - Caching, stores (Redis, MongoDB, SQL)
-  - Concurrency control, metrics, tracing
-  - Configuration management
-- **Code generation tool** (`tools/goctl/`) - CLI tool for generating code from API files
+- **REST API framework** (`rest/`) - HTTP service framework with middleware chain support
+- **RPC framework** (`zrpc/`) - gRPC-based RPC framework with etcd service discovery and p2c_ewma load balancing
+- **Gateway** (`gateway/`) - API gateway supporting both HTTP and gRPC upstreams with proto-based routing
+- **MCP Server** (`mcp/`) - Model Context Protocol server for AI agent integration via SSE
+- **Core utilities** (`core/`) - Production-grade components:
+  - Resilience: circuit breakers (`breaker/`), rate limiters (`limit/`), adaptive load shedding (`load/`)
+  - Storage: SQL with cache (`stores/sqlc/`), Redis (`stores/redis/`), MongoDB (`stores/mongo/`)
+  - Concurrency: MapReduce (`mr/`), worker pools (`executors/`), sync primitives (`syncx/`)
+  - Observability: metrics (`metric/`), tracing (`trace/`), structured logging (`logx/`)
+- **Code generation tool** (`tools/goctl/`) - CLI tool for generating Go code from `.api` and `.proto` files
 
 ## Coding Standards and Conventions
 

@@ -25,18 +27,22 @@ go-zero is a web and RPC framework with lots of built-in engineering practices d
 2. **Package naming**: Use lowercase, single-word package names when possible
 3. **Error handling**: Always handle errors explicitly, use `errorx.BatchError` for multiple errors
 4. **Context propagation**: Always pass `context.Context` as the first parameter for functions that may block
-5. **Configuration structures**: Use struct tags with JSON annotations and default values
+5. **Configuration structures**: Use struct tags with JSON annotations, defaults, and validation
 
-Example configuration pattern:
+**Pattern**: All service configs embed `service.ServiceConf` for common fields (Name, Log, Mode, Telemetry)
 ```go
 type Config struct {
+    service.ServiceConf // Always embed for services
     Host string `json:",default=0.0.0.0"`
-    Port int `json:",default=8080"`
-    Timeout int `json:",default=3000"`
-    Optional string `json:",optional"`
+    Port int // Required field (no default)
+    Timeout int64 `json:",default=3000"` // Timeouts in milliseconds
+    Optional string `json:",optional"` // Optional field
+    Mode string `json:",default=pro,options=dev|test|rt|pre|pro"` // Validated options
 }
 ```
 
+**Service modes**: `dev`/`test`/`rt` disable load shedding and stats; `pre`/`pro` enable all resilience features
+
 ### Interface Design
 
 1. **Small interfaces**: Follow Go's preference for small, focused interfaces

@@ -94,25 +100,33 @@ func TestSomething(t *testing.T) {
 
 ### REST API Development
 
-1. **API Definition**: Use `.api` files to define REST APIs
-2. **Handler pattern**: Separate business logic into logic packages
-3. **Middleware**: Use built-in middlewares (tracing, logging, metrics, recovery)
-4. **Response handling**: Use `httpx.WriteJson` for JSON responses
-5. **Error handling**: Use `httpx.Error` for HTTP error responses
+1. **API Definition**: Use `.api` files to define REST APIs with goctl codegen
+2. **Handler pattern**: Separate business logic into logic packages (handlers call logic layer)
+3. **Middleware chain**: Middlewares wrap via `chain.Chain` interface - use `Append()` or `Prepend()` to control order
+   - Built-in middlewares (all in `rest/handler/`): tracing, logging, metrics, recovery, breaker, shedding, timeout, maxconns, maxbytes, gunzip
+   - Custom middleware: `func(http.Handler) http.Handler` - call `next.ServeHTTP(w, r)` to continue chain
+4. **Response handling**: Use `httpx.WriteJson(w, code, v)` for JSON responses
+5. **Error handling**: Use `httpx.Error(w, err)` or `httpx.ErrorCtx(ctx, w, err)` for HTTP error responses
+6. **Route registration**: Routes defined with `Method`, `Path`, and `Handler` - wildcards use `:param` syntax
 
 ### RPC Development
 
-1. **Protocol Buffers**: Use protobuf for service definitions
-2. **Service discovery**: Integrate with etcd for service registration
-3. **Load balancing**: Use built-in load balancing strategies
-4. **Interceptors**: Implement interceptors for cross-cutting concerns
+1. **Protocol Buffers**: Use protobuf for service definitions, generate code with goctl
+2. **Service discovery**: Use etcd for dynamic service registration/discovery, or direct endpoints for static routing
+3. **Load balancing**: Default is `p2c_ewma` (power of 2 choices with EWMA), configurable via `BalancerName`
+4. **Client configuration**: Support `Etcd`, `Endpoints`, or `Target` - use `BuildTarget()` to construct connection string
+5. **Interceptors**: Implement gRPC interceptors for cross-cutting concerns (auth, logging, metrics)
+6. **Health checks**: gRPC health checks enabled by default (`Health: true`)
 
 ### Database Operations
 
-1. **SQL operations**: Use `sqlx` package for database operations
-2. **Caching**: Implement caching patterns with `cache` package
-3. **Transactions**: Use proper transaction handling
-4. **Connection pooling**: Configure appropriate connection pools
+1. **SQL operations**: Use `sqlx.SqlConn` interface - methods always end with `Ctx` for context support
+2. **Caching pattern**: `stores/sqlc` provides `CachedConn` for automatic cache-aside pattern
+   - `QueryRowCtx`: Query with cache key, auto-populate on cache miss
+   - `ExecCtx`: Execute and delete cache keys
+3. **Transactions**: Use `sqlx.SqlConn.TransactCtx()` to get transaction session
+4. **Connection pooling**: Managed automatically (64 max idle/open, 1min lifetime)
+5. **Test helpers**: Use `redistest.CreateRedis(t)` for Redis, SQL mocks for DB testing
 
 Example cache pattern:
 ```go

@@ -192,6 +206,36 @@ Always implement proper resource cleanup using defer and context cancellation.

|

||||

- Test: `go test ./...`

|

||||

- Test with race detection: `go test -race ./...`

|

||||

- Format: `gofmt -w .`

|

||||

- Generate code: `goctl api go -api *.api -dir .`

|

||||

- Code generation:

|

||||

- REST API: `goctl api go -api *.api -dir .`

|

||||

- RPC: `goctl rpc protoc *.proto --go_out=. --go-grpc_out=. --zrpc_out=.`

|

||||

- Model from SQL: `goctl model mysql datasource -url="user:pass@tcp(host:port)/db" -table="*" -dir="./model"`

|

||||

|

||||

## Critical Architecture Patterns

|

||||

|

||||

### Resilience Design Philosophy

|

||||

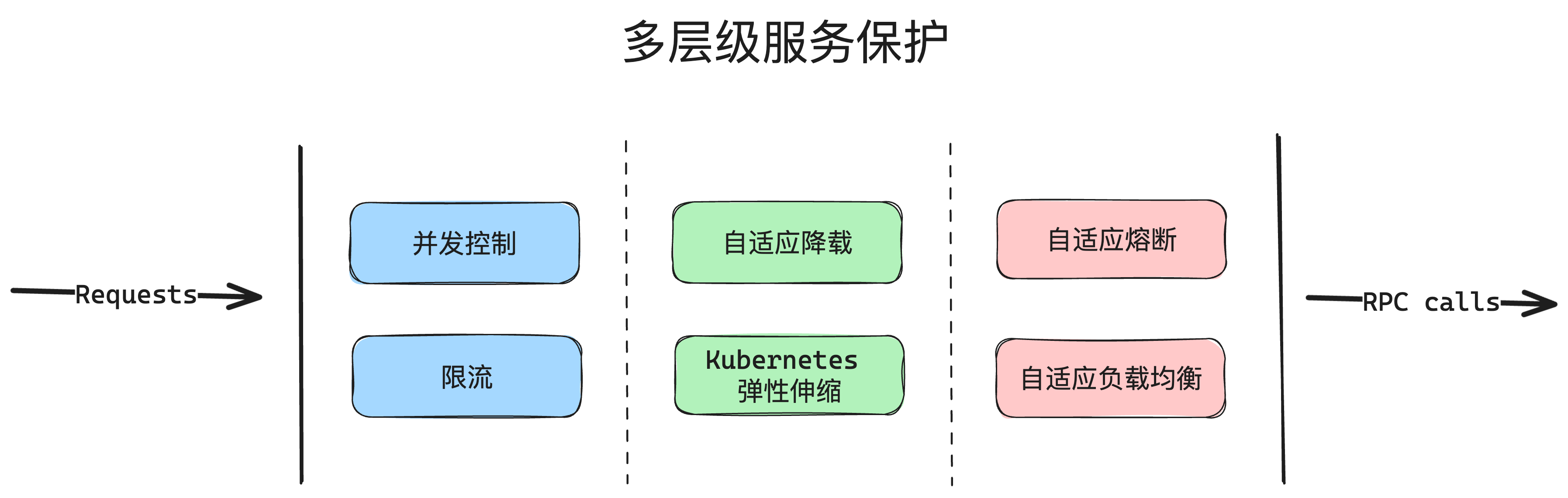

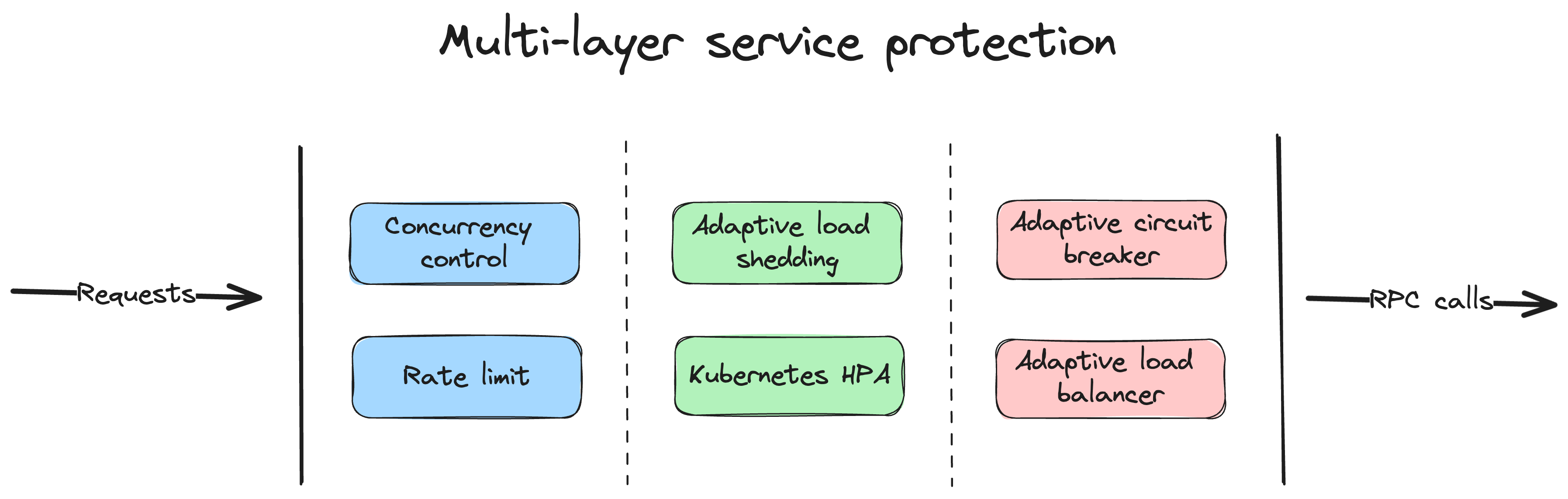

go-zero implements defense-in-depth with multiple protection layers:

|

||||

1. **Circuit Breaker** (`core/breaker`): Google SRE breaker - tracks success/failure, opens on error threshold

|

||||

2. **Adaptive Load Shedding** (`core/load`): CPU-based auto-rejection when system overloaded (disabled in dev/test/rt modes)

|

||||

3. **Rate Limiting** (`core/limit`): Token bucket (Redis-based) and period limiters

|

||||

4. **Timeout Control**: Cascading timeouts via context - set at multiple levels (client, server, handler)

+
+### Middleware Chain Architecture
+`rest/chain` provides middleware composition:
+```go
+// Middleware signature
+type Middleware func(http.Handler) http.Handler
+
+// Chain operations
+chain := chain.New(m1, m2)
+chain.Append(m3) // Adds to end: m1 -> m2 -> m3
+chain.Prepend(m0) // Adds to start: m0 -> m1 -> m2 -> m3
+handler := chain.Then(finalHandler)
+```
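
To make the `func(http.Handler) http.Handler` signature concrete, here is a small sketch that uses only the standard library. The helpers `tag`, `compose`, and `runChain` are hypothetical names introduced for illustration; `compose` is a stand-in for a chain type and is not go-zero's `rest/chain` implementation.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
	"strings"
)

// Middleware matches the signature quoted in the diff above.
type Middleware func(http.Handler) http.Handler

// tag is a hypothetical middleware that records its name and then
// continues the chain by calling next.ServeHTTP.
func tag(name string, trace *[]string) Middleware {
	return func(next http.Handler) http.Handler {
		return http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
			*trace = append(*trace, name)
			next.ServeHTTP(w, r)
		})
	}
}

// compose wraps final so that the first middleware listed runs first.
// It wraps from the last middleware inward to achieve that order.
func compose(final http.Handler, mws ...Middleware) http.Handler {
	for i := len(mws) - 1; i >= 0; i-- {
		final = mws[i](final)
	}
	return final
}

// runChain sends one request through m1 -> m2 -> final and returns the visit order.
func runChain() string {
	var trace []string
	final := http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		trace = append(trace, "final")
	})
	h := compose(final, tag("m1", &trace), tag("m2", &trace))
	h.ServeHTTP(httptest.NewRecorder(), httptest.NewRequest(http.MethodGet, "/", nil))
	return strings.Join(trace, " -> ")
}

func main() {
	fmt.Println(runChain()) // prints "m1 -> m2 -> final"
}
```

A middleware that does not call `next.ServeHTTP` short-circuits the chain, which is how rejection-style middlewares (for example an auth check) would typically stop a request.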

+
+### Concurrency Patterns
+- **MapReduce** (`core/mr`): Parallel processing with worker pools - use for batch operations
+- **Executors** (`core/executors`): Bulk/period executors for batching operations
+- **SingleFlight** (`core/syncx`): Deduplicates concurrent identical requests
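
The deduplication idea behind the SingleFlight item can be sketched with the standard library alone. The `flightGroup` type below is a hypothetical, simplified illustration of the technique (one in-flight call per key, with later callers waiting for its result); it is not the `core/syncx` implementation.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
	"time"
)

// call holds the result of one in-flight invocation.
type call struct {
	wg  sync.WaitGroup
	val any
	err error
}

// flightGroup lets concurrent callers with the same key share one execution.
type flightGroup struct {
	mu    sync.Mutex
	calls map[string]*call
}

func newFlightGroup() *flightGroup {
	return &flightGroup{calls: make(map[string]*call)}
}

// Do runs fn once per key at a time; callers that arrive while a call for
// the same key is in flight wait for it and receive the same result.
func (g *flightGroup) Do(key string, fn func() (any, error)) (any, error) {
	g.mu.Lock()
	if c, ok := g.calls[key]; ok {
		g.mu.Unlock()
		c.wg.Wait()
		return c.val, c.err
	}
	c := new(call)
	c.wg.Add(1)
	g.calls[key] = c
	g.mu.Unlock()

	c.val, c.err = fn()

	g.mu.Lock()
	delete(g.calls, key)
	g.mu.Unlock()
	c.wg.Done()
	return c.val, c.err
}

// run starts n concurrent callers for one key and reports how many times
// the slow backend function actually executed.
func run(n int) (int32, []any) {
	g := newFlightGroup()
	var backendCalls int32
	results := make([]any, n)
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func(i int) {
			defer wg.Done()
			results[i], _ = g.Do("user:1", func() (any, error) {
				atomic.AddInt32(&backendCalls, 1)
				time.Sleep(10 * time.Millisecond) // simulate a slow lookup
				return "profile", nil
			})
		}(i)
	}
	wg.Wait()
	return atomic.LoadInt32(&backendCalls), results
}

func main() {
	calls, results := run(5)
	fmt.Println("backend calls:", calls, "for 5 callers; result:", results[0])
}
```

How many backend calls are saved depends on timing: only callers that overlap with an in-flight call share its result.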

 
 Remember to run tests and ensure all checks pass before submitting changes. The project emphasizes high quality, performance, and reliability, so these should be primary considerations in all development work.

8

.github/workflows/codeql-analysis.yml

vendored


@@ -35,11 +35,11 @@ jobs:

|

||||

|

||||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v5

|

||||

uses: actions/checkout@v6

|

||||

|

||||

# Initializes the CodeQL tools for scanning.

|

||||

- name: Initialize CodeQL

|

||||

uses: github/codeql-action/init@v3

|

||||

uses: github/codeql-action/init@v4

|

||||

with:

|

||||

languages: ${{ matrix.language }}

|

||||

# If you wish to specify custom queries, you can do so here or in a config file.

|

||||

@@ -50,7 +50,7 @@ jobs:

|

||||

# Autobuild attempts to build any compiled languages (C/C++, C#, or Java).

|

||||

# If this step fails, then you should remove it and run the build manually (see below)

|

||||

- name: Autobuild

|

||||

uses: github/codeql-action/autobuild@v3

|

||||

uses: github/codeql-action/autobuild@v4

|

||||

|

||||

# ℹ️ Command-line programs to run using the OS shell.

|

||||

# 📚 https://git.io/JvXDl

|

||||

@@ -64,4 +64,4 @@ jobs:

|

||||

# make release

|

||||

|

||||

- name: Perform CodeQL Analysis

|

||||

uses: github/codeql-action/analyze@v3

|

||||

uses: github/codeql-action/analyze@v4

|

||||

|

||||

4

.github/workflows/go.yml

vendored


@@ -12,7 +12,7 @@ jobs:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Check out code into the Go module directory

|

||||

uses: actions/checkout@v5

|

||||

uses: actions/checkout@v6

|

||||

|

||||

- name: Set up Go 1.x

|

||||

uses: actions/setup-go@v6

|

||||

@@ -52,7 +52,7 @@ jobs:

|

||||

runs-on: windows-latest

|

||||

steps:

|

||||

- name: Checkout codebase

|

||||

uses: actions/checkout@v5

|

||||

uses: actions/checkout@v6

|

||||

|

||||

- name: Set up Go 1.x

|

||||

uses: actions/setup-go@v6

|

||||

|

||||

2

.github/workflows/release.yaml

vendored


@@ -16,7 +16,7 @@ jobs:

|

||||

- goarch: "386"

|

||||

goos: darwin

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/checkout@v6

|

||||

- uses: zeromicro/go-zero-release-action@master

|

||||

with:

|

||||

github_token: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

7

.github/workflows/reviewdog.yml

vendored


@@ -5,7 +5,12 @@ jobs:

|

||||

name: runner / staticcheck

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/checkout@v6

|

||||

- uses: actions/setup-go@v6

|

||||

with:

|

||||

go-version-file: go.mod

|

||||

check-latest: true

|

||||

cache: true

|

||||

- uses: reviewdog/action-staticcheck@v1

|

||||

with:

|

||||

github_token: ${{ secrets.github_token }}

|

||||

|

||||

2

.github/workflows/version-check.yml

vendored


@@ -10,7 +10,7 @@ jobs:

|

||||

version-check:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v5

|

||||

- uses: actions/checkout@v6

|

||||

|

||||

- name: Set up Go

|

||||

uses: actions/setup-go@v6

|

||||

|

||||

@@ -40,7 +40,7 @@ type (

|

||||

}

|

||||

)

|

||||

|

||||

// New create a Filter, store is the backed redis, key is the key for the bloom filter,

|

||||

// New creates a Filter, store is the backed redis, key is the key for the bloom filter,

|

||||

// bits is how many bits will be used, maps is how many hashes for each addition.

|

||||

// best practices:

|

||||

// elements - means how many actual elements

|

||||

|

||||

@@ -81,6 +81,10 @@ func (c *Cache) Del(key string) {

|

||||

delete(c.data, key)

|

||||

c.lruCache.remove(key)

|

||||

c.lock.Unlock()

|

||||

|

||||

// RemoveTimer is called outside the lock to avoid performance impact from this

|

||||

// potentially time-consuming operation. Data integrity is maintained by lruCache,

|

||||

// which will eventually evict any remaining entries when capacity is exceeded.

|

||||

c.timingWheel.RemoveTimer(key)

|

||||

}

|

||||

|

||||

|

||||

@@ -164,6 +164,7 @@ func (tw *TimingWheel) Stop() {

|

||||

|

||||

func (tw *TimingWheel) drainAll(fn func(key, value any)) {

|

||||

runner := threading.NewTaskRunner(drainWorkers)

|

||||

|

||||

for _, slot := range tw.slots {

|

||||

for e := slot.Front(); e != nil; {

|

||||

task := e.Value.(*timingEntry)

|

||||

@@ -177,6 +178,8 @@ func (tw *TimingWheel) drainAll(fn func(key, value any)) {

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

runner.Wait()

|

||||

}

|

||||

|

||||

func (tw *TimingWheel) getPositionAndCircle(d time.Duration) (pos, circle int) {

|

||||

|

||||

@@ -629,6 +629,157 @@ func TestMoveAndRemoveTask(t *testing.T) {

|

||||

assert.Equal(t, 0, len(keys))

|

||||

}

|

||||

|

||||

// TestTimingWheel_DrainClosureBug tests the closure capture bug in drainAll

|

||||

// Issue: https://github.com/zeromicro/go-zero/issues/5314

|

||||

func TestTimingWheel_DrainClosureBug(t *testing.T) {

|

||||

ticker := timex.NewFakeTicker()

|

||||

tw, _ := NewTimingWheelWithTicker(testStep, 10, func(k, v any) {}, ticker)

|

||||

defer tw.Stop()

|

||||

|

||||

// Set multiple timers with different values

|

||||

for i := 0; i < 10; i++ {

|

||||

tw.SetTimer(i, i*10, testStep*5)

|

||||

}

|

||||

|

||||

// Give time for timers to be set

|

||||

time.Sleep(time.Millisecond * 100)

|

||||

|

||||

var mu sync.Mutex

|

||||

received := make(map[int]int)

|

||||

var wg sync.WaitGroup

|

||||

wg.Add(10)

|

||||

|

||||

tw.Drain(func(key, value any) {

|

||||

mu.Lock()

|

||||

defer mu.Unlock()

|

||||

k := key.(int)

|

||||

v := value.(int)

|

||||

received[k] = v

|

||||

wg.Done()

|

||||

})

|

||||

|

||||

wg.Wait()

|

||||

|

||||

// Check if all values match their keys

|

||||

for k, v := range received {

|

||||

expected := k * 10

|

||||

assert.Equal(t, expected, v, "key %d should have value %d, got %d", k, expected, v)

|

||||

}

|

||||

}

|

||||

|

||||

// TestTimingWheel_RunTasksClosureBug tests the closure capture bug in runTasks

|

||||

// Issue: https://github.com/zeromicro/go-zero/issues/5314

|

||||

func TestTimingWheel_RunTasksClosureBug(t *testing.T) {

|

||||

ticker := timex.NewFakeTicker()

|

||||

var mu sync.Mutex

|

||||

executed := make(map[int]int)

|

||||

var wg sync.WaitGroup

|

||||

|

||||

tw, _ := NewTimingWheelWithTicker(testStep, 10, func(k, v any) {

|

||||

mu.Lock()

|

||||

defer mu.Unlock()

|

||||

key := k.(int)

|

||||

val := v.(int)

|

||||

executed[key] = val

|

||||

wg.Done()

|

||||

}, ticker)

|

||||

defer tw.Stop()

|

||||

|

||||

// Set multiple timers that should fire in the same tick

|

||||

count := 10

|

||||

wg.Add(count)

|

||||

for i := 0; i < count; i++ {

|

||||

tw.SetTimer(i, i*10, testStep)

|

||||

}

|

||||

|

||||

// Advance ticker to trigger tasks

|

||||

ticker.Tick()

|

||||

|

||||

// Wait for execution with timeout

|

||||

done := make(chan struct{})

|

||||

go func() {

|

||||

wg.Wait()

|

||||

close(done)

|

||||

}()

|

||||

|

||||

select {

|

||||

case <-done:

|

||||

// Success

|

||||

case <-time.After(2 * time.Second):

|

||||

t.Fatal("timeout waiting for tasks to execute")

|

||||

}

|

||||

|

||||

// Verify all tasks executed with correct values

|

||||

assert.Equal(t, count, len(executed), "should have executed all tasks")

|

||||

for k, v := range executed {

|

||||

expected := k * 10

|

||||

assert.Equal(t, expected, v, "key %d should have value %d, got %d", k, expected, v)

|

||||

}

|

||||

}

|

||||

|

||||

// TestTimingWheel_RunTasksRaceCondition tests for race conditions in runTasks

|

||||

// This test specifically targets the loop variable capture bug

|

||||

func TestTimingWheel_RunTasksRaceCondition(t *testing.T) {

|

||||

// Run multiple times to increase likelihood of catching the bug

|

||||

for attempt := 0; attempt < 10; attempt++ {

|

||||

t.Run("", func(t *testing.T) {

|

||||

ticker := timex.NewFakeTicker()

|

||||

var mu sync.Mutex

|

||||

keyValues := make(map[int][]int)

|

||||

var wg sync.WaitGroup

|

||||

|

||||

tw, _ := NewTimingWheelWithTicker(testStep, 10, func(k, v any) {

|

||||

// Add small delay to increase chance of race

|

||||

time.Sleep(time.Microsecond)

|

||||

mu.Lock()

|

||||

defer mu.Unlock()

|

||||

key := k.(int)

|

||||

val := v.(int)

|

||||

keyValues[key] = append(keyValues[key], val)

|

||||

wg.Done()

|

||||

}, ticker)

|

||||

defer tw.Stop()

|

||||

|

||||

// Set many timers rapidly to increase chance of race

|

||||

count := 50

|

||||

wg.Add(count)

|

||||

for i := 0; i < count; i++ {

|

||||

tw.SetTimer(i, i*100, testStep)

|

||||

}

|

||||

|

||||

ticker.Tick()

|

||||

|

||||

done := make(chan struct{})

|

||||

go func() {

|

||||

wg.Wait()

|

||||

close(done)

|

||||

}()

|

||||

|

||||

select {

|

||||

case <-done:

|

||||

case <-time.After(5 * time.Second):

|

||||

t.Fatal("timeout waiting for tasks")

|

||||

}

|

||||

|

||||

// Check for duplicates or wrong values

|

||||

wrongCount := 0

|

||||

for key, values := range keyValues {

|

||||

assert.Equal(t, 1, len(values), "key %d should only execute once, got %v", key, values)

|

||||

if len(values) > 0 {

|

||||

expected := key * 100

|

||||

if values[0] != expected {

|

||||

wrongCount++

|

||||

t.Logf("BUG DETECTED: key %d should have value %d, got %d", key, expected, values[0])

|

||||

}

|

||||

}

|

||||

}

|

||||

if wrongCount > 0 {

|

||||

t.Errorf("Found %d tasks with wrong values due to closure bug", wrongCount)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

func BenchmarkTimingWheel(b *testing.B) {

|

||||

b.ReportAllocs()

|

||||

|

||||

|

||||

@@ -368,5 +368,5 @@ func getFullName(parent, child string) string {

|

||||

return child

|

||||

}

|

||||

|

||||

return strings.Join([]string{parent, child}, ".")

|

||||

return parent + "." + child

|

||||

}

|

||||

|

||||

@@ -1377,3 +1377,23 @@ func (m mockConfig) Validate() error {

|

||||

|

||||

return nil

|

||||

}

|

||||

|

||||

func TestGetFullName(t *testing.T) {

|

||||

tests := []struct {

|

||||

parent string

|

||||

child string

|

||||

want string

|

||||

}{

|

||||

{"", "child", "child"},

|

||||

{"parent", "child", "parent.child"},

|

||||

{"a.b", "c", "a.b.c"},

|

||||

{"root", "nested.field", "root.nested.field"},

|

||||

}

|

||||

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.parent+"."+tt.child, func(t *testing.T) {

|

||||

got := getFullName(tt.parent, tt.child)

|

||||

assert.Equal(t, tt.want, got)

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

@@ -1,6 +1,9 @@

|

||||

package subscriber

|

||||

|

||||

import (

|

||||

"sync"

|

||||

"sync/atomic"

|

||||

|

||||

"github.com/zeromicro/go-zero/core/discov"

|

||||

"github.com/zeromicro/go-zero/core/logx"

|

||||

)

|

||||

@@ -37,6 +40,7 @@ func NewEtcdSubscriber(conf EtcdConf) (Subscriber, error) {

|

||||

func buildSubOptions(conf EtcdConf) []discov.SubOption {

|

||||

opts := []discov.SubOption{

|

||||

discov.WithExactMatch(),

|

||||

discov.WithContainer(newContainer()),

|

||||

}

|

||||

|

||||

if len(conf.User) > 0 {

|

||||

@@ -65,3 +69,47 @@ func (s *etcdSubscriber) Value() (string, error) {

|

||||

|

||||

return "", nil

|

||||

}

|

||||

|

||||

type container struct {

|

||||

value atomic.Value

|

||||

listeners []func()

|

||||

lock sync.Mutex

|

||||

}

|

||||

|

||||

func newContainer() *container {

|

||||

return &container{}

|

||||

}

|

||||

|

||||

func (c *container) OnAdd(kv discov.KV) {

|

||||

c.value.Store([]string{kv.Val})

|

||||

c.notifyChange()

|

||||

}

|

||||

|

||||

func (c *container) OnDelete(_ discov.KV) {

|

||||

c.value.Store([]string(nil))

|

||||

c.notifyChange()

|

||||

}

|

||||

|

||||

func (c *container) AddListener(listener func()) {

|

||||

c.lock.Lock()

|

||||

c.listeners = append(c.listeners, listener)

|

||||

c.lock.Unlock()

|

||||

}

|

||||

|

||||

func (c *container) GetValues() []string {

|

||||

if vals, ok := c.value.Load().([]string); ok {

|

||||

return vals

|

||||

}

|

||||

|

||||

return []string(nil)

|

||||

}

|

||||

|

||||

func (c *container) notifyChange() {

|

||||

c.lock.Lock()

|

||||

listeners := append(([]func())(nil), c.listeners...)

|

||||

c.lock.Unlock()

|

||||

|

||||

for _, listener := range listeners {

|

||||

listener()

|

||||

}

|

||||

}

|

||||

|

||||

186

core/configcenter/subscriber/etcd_test.go

Normal file


@@ -0,0 +1,186 @@

|

||||

package subscriber

|

||||

|

||||

import (

|

||||

"testing"

|

||||

|

||||

"github.com/stretchr/testify/assert"

|

||||

"github.com/zeromicro/go-zero/core/discov"

|

||||

)

|

||||

|

||||

const (

|

||||

actionAdd = iota

|

||||

actionDel

|

||||

)

|

||||

|

||||

func TestConfigCenterContainer(t *testing.T) {

|

||||

type action struct {

|

||||

act int

|

||||

key string

|

||||

val string

|

||||

}

|

||||

tests := []struct {

|

||||

name string

|

||||

do []action

|

||||

expect []string

|

||||

}{

|

||||

{

|

||||

name: "add one",

|

||||

do: []action{

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "first",

|

||||

val: "a",

|

||||

},

|

||||

},

|

||||

expect: []string{

|

||||

"a",

|

||||

},

|

||||

},

|

||||

{

|

||||

name: "add two",

|

||||

do: []action{

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "first",

|

||||

val: "a",

|

||||

},

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "second",

|

||||

val: "b",

|

||||

},

|

||||

},

|

||||

expect: []string{

|

||||

"b",

|

||||

},

|

||||

},

|

||||

{

|

||||

name: "add two, delete one",

|

||||

do: []action{

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "first",

|

||||

val: "a",

|

||||

},

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "second",

|

||||

val: "b",

|

||||

},

|

||||

{

|

||||

act: actionDel,

|

||||

key: "first",

|

||||

},

|

||||

},

|

||||

expect: []string(nil),

|

||||

},

|

||||

{

|

||||

name: "add two, delete two",

|

||||

do: []action{

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "first",

|

||||

val: "a",

|

||||

},

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "second",

|

||||

val: "b",

|

||||

},

|

||||

{

|

||||

act: actionDel,

|

||||

key: "first",

|

||||

},

|

||||

{

|

||||

act: actionDel,

|

||||

key: "second",

|

||||

},

|

||||

},

|

||||

expect: []string(nil),

|

||||

},

|

||||

{

|

||||

name: "add two, dup values",

|

||||

do: []action{

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "first",

|

||||

val: "a",

|

||||

},

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "second",

|

||||

val: "b",

|

||||

},

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "third",

|

||||

val: "a",

|

||||

},

|

||||

},

|

||||

expect: []string{"a"},

|

||||

},

|

||||

{

|

||||

name: "add three, dup values, delete two, add one",

|

||||

do: []action{

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "first",

|

||||

val: "a",

|

||||

},

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "second",

|

||||

val: "b",

|

||||

},

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "third",

|

||||

val: "a",

|

||||

},

|

||||

{

|

||||

act: actionDel,

|

||||

key: "first",

|

||||

},

|

||||

{

|

||||

act: actionDel,

|

||||

key: "second",

|

||||

},

|

||||

{

|

||||

act: actionAdd,

|

||||

key: "forth",

|

||||

val: "c",

|

||||

},

|

||||

},

|

||||

expect: []string{"c"},

|

||||

},

|

||||

}

|

||||

|

||||

for _, test := range tests {

|

||||

t.Run(test.name, func(t *testing.T) {

|

||||

var changed bool

|

||||

c := newContainer()

|

||||

c.AddListener(func() {

|

||||

changed = true

|

||||

})

|

||||

assert.Nil(t, c.GetValues())

|

||||

assert.False(t, changed)

|

||||

|

||||

for _, order := range test.do {

|

||||

if order.act == actionAdd {

|

||||

c.OnAdd(discov.KV{

|

||||

Key: order.key,

|

||||

Val: order.val,

|

||||

})

|

||||

} else {

|

||||

c.OnDelete(discov.KV{

|

||||

Key: order.key,

|

||||

Val: order.val,

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

assert.True(t, changed)

|

||||

assert.ElementsMatch(t, test.expect, c.GetValues())

|

||||

})

|

||||

}

|

||||

}

|

||||

@@ -386,8 +386,9 @@ func (c *cluster) watch(cli EtcdClient, key watchKey, rev int64) {

|

||||

rev = c.load(cli, key)

|

||||

}

|

||||

|

||||

// log the error and retry

|

||||

// log the error and retry with cooldown to prevent CPU/disk exhaustion

|

||||

logc.Error(cli.Ctx(), err)

|

||||

time.Sleep(coolDownUnstable.AroundDuration(coolDownInterval))

|

||||

}

|

||||

}

|

||||

|

||||

|

||||

@@ -19,8 +19,9 @@ type (

|

||||

exclusive bool

|

||||

key string

|

||||

exactMatch bool

|

||||

items *container

|

||||

items Container

|

||||

}

|

||||

KV = internal.KV

|

||||

)

|

||||

|

||||

// NewSubscriber returns a Subscriber.

|

||||

@@ -35,7 +36,9 @@ func NewSubscriber(endpoints []string, key string, opts ...SubOption) (*Subscrib

|

||||

for _, opt := range opts {

|

||||

opt(sub)

|

||||

}

|

||||

sub.items = newContainer(sub.exclusive)

|

||||

if sub.items == nil {

|

||||

sub.items = newContainer(sub.exclusive)

|

||||

}

|

||||

|

||||

if err := internal.GetRegistry().Monitor(endpoints, key, sub.exactMatch, sub.items); err != nil {

|

||||

return nil, err

|

||||

@@ -46,7 +49,7 @@ func NewSubscriber(endpoints []string, key string, opts ...SubOption) (*Subscrib

|

||||

|

||||

// AddListener adds listener to s.

|

||||

func (s *Subscriber) AddListener(listener func()) {

|

||||

s.items.addListener(listener)

|

||||

s.items.AddListener(listener)

|

||||

}

|

||||

|

||||

// Close closes the subscriber.

|

||||

@@ -56,7 +59,7 @@ func (s *Subscriber) Close() {

|

||||

|

||||

// Values returns all the subscription values.

|

||||

func (s *Subscriber) Values() []string {

|

||||

return s.items.getValues()

|

||||

return s.items.GetValues()

|

||||

}

|

||||

|

||||

// Exclusive means that key value can only be 1:1,

|

||||

@@ -88,16 +91,32 @@ func WithSubEtcdTLS(certFile, certKeyFile, caFile string, insecureSkipVerify boo

|

||||

}

|

||||

}

|

||||

|

||||

type container struct {

|

||||

exclusive bool

|

||||

values map[string][]string

|

||||

mapping map[string]string

|

||||

snapshot atomic.Value

|

||||

dirty *syncx.AtomicBool

|

||||

listeners []func()

|

||||

lock sync.Mutex

|

||||

// WithContainer provides a custom container to the subscriber.

|

||||

func WithContainer(container Container) SubOption {

|

||||

return func(sub *Subscriber) {

|

||||

sub.items = container

|

||||

}

|

||||

}

|

||||

|

||||

type (

|

||||

Container interface {

|

||||

OnAdd(kv internal.KV)

|

||||

OnDelete(kv internal.KV)

|

||||

AddListener(listener func())

|

||||

GetValues() []string

|

||||

}

|

||||

|

||||

container struct {

|

||||

exclusive bool

|

||||

values map[string][]string

|

||||

mapping map[string]string

|

||||

snapshot atomic.Value

|

||||

dirty *syncx.AtomicBool

|

||||

listeners []func()

|

||||

lock sync.Mutex

|

||||

}

|

||||

)

|

||||

|

||||

func newContainer(exclusive bool) *container {

|

||||

return &container{

|

||||

exclusive: exclusive,

|

||||

@@ -141,7 +160,7 @@ func (c *container) addKv(key, value string) ([]string, bool) {

|

||||

return nil, false

|

||||

}

|

||||

|

||||

func (c *container) addListener(listener func()) {

|

||||

func (c *container) AddListener(listener func()) {

|

||||

c.lock.Lock()

|

||||

c.listeners = append(c.listeners, listener)

|

||||

c.lock.Unlock()

|

||||

@@ -170,7 +189,7 @@ func (c *container) doRemoveKey(key string) {

|

||||

}

|

||||

}

|

||||

|

||||

func (c *container) getValues() []string {

|

||||

func (c *container) GetValues() []string {

|

||||

if !c.dirty.True() {

|

||||

return c.snapshot.Load().([]string)

|

||||

}

|

||||

|

||||

@@ -171,10 +171,10 @@ func TestContainer(t *testing.T) {

|

||||

t.Run(test.name, func(t *testing.T) {

|

||||

var changed bool

|

||||

c := newContainer(exclusive)

|

||||

c.addListener(func() {

|

||||

c.AddListener(func() {

|

||||

changed = true

|

||||

})

|

||||

assert.Nil(t, c.getValues())

|

||||

assert.Nil(t, c.GetValues())

|

||||

assert.False(t, changed)

|

||||

|

||||

for _, order := range test.do {

|

||||

@@ -193,9 +193,9 @@ func TestContainer(t *testing.T) {

|

||||

|

||||

assert.True(t, changed)

|

||||

assert.True(t, c.dirty.True())

|

||||

assert.ElementsMatch(t, test.expect, c.getValues())

|

||||

assert.ElementsMatch(t, test.expect, c.GetValues())

|

||||

assert.False(t, c.dirty.True())

|

||||

assert.ElementsMatch(t, test.expect, c.getValues())

|

||||

assert.ElementsMatch(t, test.expect, c.GetValues())

|

||||

})

|

||||

}

|

||||

}

|

||||

@@ -204,12 +204,14 @@ func TestContainer(t *testing.T) {

|

||||

func TestSubscriber(t *testing.T) {

|

||||

sub := new(Subscriber)

|

||||

Exclusive()(sub)

|

||||

sub.items = newContainer(sub.exclusive)

|

||||

c := newContainer(sub.exclusive)

|

||||

WithContainer(c)(sub)

|

||||

sub.items = c

|

||||

var count int32

|

||||

sub.AddListener(func() {

|

||||

atomic.AddInt32(&count, 1)

|

||||

})

|

||||

sub.items.notifyChange()

|

||||

c.notifyChange()

|

||||

assert.Empty(t, sub.Values())

|

||||

assert.Equal(t, int32(1), atomic.LoadInt32(&count))

|

||||

}

|

||||

@@ -229,12 +231,13 @@ func TestWithSubEtcdAccount(t *testing.T) {

|

||||

func TestWithExactMatch(t *testing.T) {

|

||||

sub := new(Subscriber)

|

||||

WithExactMatch()(sub)

|

||||

sub.items = newContainer(sub.exclusive)

|

||||

c := newContainer(sub.exclusive)

|

||||

sub.items = c

|

||||

var count int32

|

||||

sub.AddListener(func() {

|

||||

atomic.AddInt32(&count, 1)

|

||||

})

|

||||

sub.items.notifyChange()

|

||||

c.notifyChange()

|

||||

assert.Empty(t, sub.Values())

|

||||

assert.Equal(t, int32(1), atomic.LoadInt32(&count))

|

||||

}

|

||||

|

||||

@@ -168,7 +168,7 @@ func (s Stream) Count() (count int) {

|

||||

return

|

||||

}

|

||||

|

||||

// Distinct removes the duplicated items base on the given KeyFunc.

|

||||

// Distinct removes the duplicated items based on the given KeyFunc.

|

||||

func (s Stream) Distinct(fn KeyFunc) Stream {

|

||||

source := make(chan any)

|

||||

|

||||

@@ -459,7 +459,7 @@ func (s Stream) Tail(n int64) Stream {

|

||||

return Range(source)

|

||||

}

|

||||

|

||||

// Walk lets the callers handle each item, the caller may write zero, one or more items base on the given item.

|

||||

// Walk lets the callers handle each item, the caller may write zero, one or more items based on the given item.

|

||||

func (s Stream) Walk(fn WalkFunc, opts ...Option) Stream {

|

||||

option := buildOptions(opts...)

|

||||

if option.unlimitedWorkers {

|

||||

|

||||

@@ -1,8 +1,6 @@

|

||||

package fx

|

||||

|

||||

import (

|

||||

"io"

|

||||

"log"

|

||||

"math/rand"

|

||||

"reflect"

|

||||

"runtime"

|

||||

@@ -13,6 +11,7 @@ import (

|

||||

"time"

|

||||

|

||||

"github.com/stretchr/testify/assert"

|

||||

"github.com/zeromicro/go-zero/core/logx/logtest"

|

||||

"github.com/zeromicro/go-zero/core/stringx"

|

||||

"go.uber.org/goleak"

|

||||

)

|

||||

@@ -238,7 +237,7 @@ func TestLast(t *testing.T) {

|

||||

|

||||

func TestMap(t *testing.T) {

|

||||

runCheckedTest(t, func(t *testing.T) {

|

||||

log.SetOutput(io.Discard)

|

||||

logtest.Discard(t)

|

||||

|

||||

tests := []struct {

|

||||

mapper MapFunc

|

||||

|

||||

@@ -96,7 +96,7 @@ func (h *ConsistentHash) AddWithWeight(node any, weight int) {

|

||||

h.AddWithReplicas(node, replicas)

|

||||

}

|

||||

|

||||

// Get returns the corresponding node from h base on the given v.

|

||||

// Get returns the corresponding node from h based on the given v.

|

||||

func (h *ConsistentHash) Get(v any) (any, bool) {

|

||||

h.lock.RLock()

|

||||

defer h.lock.RUnlock()

|

||||

|

||||

@@ -66,7 +66,7 @@ type (

|

||||

gzip bool

|

||||

}

|

||||

|

||||

// SizeLimitRotateRule a rotation rule that make the log file rotated base on size

|

||||

// SizeLimitRotateRule a rotation rule that makes the log file rotated based on size

|

||||

SizeLimitRotateRule struct {

|

||||

DailyRotateRule

|

||||

maxSize int64

|

||||

|

||||

@@ -444,6 +444,8 @@ func wrapLevelWithColor(level string) string {

|

||||

colour = color.FgRed

|

||||

case levelError:

|

||||

colour = color.FgRed

|

||||

case levelSevere:

|

||||

colour = color.FgRed

|

||||

case levelFatal:

|

||||

colour = color.FgRed

|

||||

case levelInfo:

|

||||

|

||||

@@ -104,14 +104,13 @@ func convertToString(val any, fullName string) (string, error) {

|

||||

func convertTypeFromString(kind reflect.Kind, str string) (any, error) {

|

||||

switch kind {

|

||||

case reflect.Bool:

|

||||

switch strings.ToLower(str) {

|

||||

case "1", "true":

|

||||

if str == "1" || strings.EqualFold(str, "true") {

|

||||

return true, nil

|

||||

case "0", "false":

|

||||

return false, nil

|

||||

default:

|

||||

return false, errTypeMismatch

|

||||

}

|

||||

if str == "0" || strings.EqualFold(str, "false") {

|

||||

return false, nil

|

||||

}

|

||||

return false, errTypeMismatch

|

||||

case reflect.Int:

|

||||

return strconv.ParseInt(str, 10, intSize)

|

||||

case reflect.Int8:

|

||||

|

||||

@@ -334,3 +334,43 @@ func TestValidateValueRange(t *testing.T) {

|

||||

func TestSetMatchedPrimitiveValue(t *testing.T) {

|

||||

assert.Error(t, setMatchedPrimitiveValue(reflect.Func, reflect.ValueOf(2), "1"))

|

||||

}

|

||||

|

||||

func TestConvertTypeFromString_Bool(t *testing.T) {

|

||||

tests := []struct {

|

||||

name string

|

||||

input string

|

||||

want bool

|

||||

wantErr bool

|

||||

}{

|

||||

// true cases

|

||||

{name: "1", input: "1", want: true, wantErr: false},

|

||||

{name: "true lowercase", input: "true", want: true, wantErr: false},

|

||||

{name: "True mixed", input: "True", want: true, wantErr: false},

|

||||

{name: "TRUE uppercase", input: "TRUE", want: true, wantErr: false},

|

||||

{name: "TrUe mixed", input: "TrUe", want: true, wantErr: false},

|

||||

// false cases

|

||||

{name: "0", input: "0", want: false, wantErr: false},

|

||||

{name: "false lowercase", input: "false", want: false, wantErr: false},

|

||||

{name: "False mixed", input: "False", want: false, wantErr: false},

|

||||

{name: "FALSE uppercase", input: "FALSE", want: false, wantErr: false},

|

||||

{name: "FaLsE mixed", input: "FaLsE", want: false, wantErr: false},

|

||||

// error cases

|

||||

{name: "invalid yes", input: "yes", want: false, wantErr: true},

|

||||

{name: "invalid no", input: "no", want: false, wantErr: true},

|

||||

{name: "invalid empty", input: "", want: false, wantErr: true},

|

||||

{name: "invalid 2", input: "2", want: false, wantErr: true},

|

||||

{name: "invalid truee", input: "truee", want: false, wantErr: true},

|

||||

}

|

||||

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

got, err := convertTypeFromString(reflect.Bool, tt.input)

|

||||

if tt.wantErr {

|

||||

assert.Error(t, err)

|

||||

} else {

|

||||

assert.NoError(t, err)

|

||||

assert.Equal(t, tt.want, got)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||
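The boolean branch of `convertTypeFromString` and its table test above can be tried in isolation. The sketch below copies the two `strings.EqualFold` checks into a standalone helper; `parseBool` is a hypothetical name, and `errTypeMismatch` is redeclared locally with an assumed message so the example compiles on its own.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

// errTypeMismatch is a local stand-in for the package-level error in the diff.
var errTypeMismatch = errors.New("type mismatch")

// parseBool accepts "1"/"0" and any casing of "true"/"false".
// Everything else, including the empty string, is rejected.
func parseBool(str string) (bool, error) {
	if str == "1" || strings.EqualFold(str, "true") {
		return true, nil
	}
	if str == "0" || strings.EqualFold(str, "false") {
		return false, nil
	}
	return false, errTypeMismatch
}

func main() {
	for _, in := range []string{"1", "TrUe", "FALSE", "yes", ""} {
		v, err := parseBool(in)
		fmt.Printf("%q -> %v, %v\n", in, v, err)
	}
}
```

Compared with lowercasing the input first, `strings.EqualFold` compares without building a new string, which may be the reason for the change, though the diff itself does not say so.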

@@ -6,7 +6,7 @@ import (

|

||||

"time"

|

||||

)

|

||||

|

||||

// An Unstable is used to generate random value around the mean value base on given deviation.

|

||||

// An Unstable is used to generate random value around the mean value based on given deviation.

|

||||

type Unstable struct {

|

||||

deviation float64

|

||||

r *rand.Rand

|

||||

|

||||

@@ -4,8 +4,6 @@ import (

|

||||

"context"

|

||||

"errors"

|

||||

"fmt"

|

||||

"io"

|

||||

"log"

|

||||

"runtime"

|

||||

"sync/atomic"

|

||||

"testing"

|

||||

@@ -17,9 +15,6 @@ import (

|

||||

|

||||

var errDummy = errors.New("dummy")

|

||||

|

||||

func init() {

|

||||

log.SetOutput(io.Discard)

|

||||

}

|

||||

|

||||

func TestFinish(t *testing.T) {

|

||||

defer goleak.VerifyNone(t)

|

||||

|

||||

@@ -532,7 +532,7 @@ func createModel(t *testing.T, coll mon.Collection) *Model {

|

||||

}

|

||||

}

|

||||

|

||||

// mustNewTestModel returns a test Model with the given cache.

|

||||

// mustNewTestModel returns a test Model with the given cache.

|

||||

func mustNewTestModel(collection mon.Collection, c cache.CacheConf, opts ...cache.Option) *Model {

|

||||

return &Model{

|

||||

Model: &mon.Model{

|

||||

|

||||

@@ -259,12 +259,34 @@ func (s *Redis) BitPosCtx(ctx context.Context, key string, bit, start, end int64

|

||||

}

|

||||

|

||||

// Blpop uses passed in redis connection to execute blocking queries.

|

||||

//

|

||||

// For blocking operations, you must create a dedicated RedisNode using CreateBlockingNode to avoid

|

||||

// exhausting the connection pool. Blocking commands hold connections for extended periods and should

|

||||

// not share the regular connection pool.

|

||||

//

|

||||

// Example usage:

|

||||

//

|

||||

// node, err := redis.CreateBlockingNode(rds)

|

||||

// if err != nil {

|

||||

// // handle error

|

||||

// }

|

||||

// defer node.Close()

|

||||

//

|

||||

// value, err := rds.Blpop(node, "mylist")

|

||||

// if err != nil {

|

||||

// // handle error

|

||||

// }

|

||||

//

|

||||

// Doesn't benefit from pooling redis connections of blocking queries

|

||||

func (s *Redis) Blpop(node RedisNode, key string) (string, error) {

|

||||

return s.BlpopCtx(context.Background(), node, key)

|

||||

}

|

||||

|

||||

// BlpopCtx uses passed in redis connection to execute blocking queries.

|

||||

//

|

||||

// For blocking operations, you must create a dedicated RedisNode using CreateBlockingNode.

|

||||

// See Blpop for usage examples.

|

||||

//

|

||||

// Doesn't benefit from pooling redis connections of blocking queries

|

||||

func (s *Redis) BlpopCtx(ctx context.Context, node RedisNode, key string) (string, error) {

|

||||

return s.BlpopWithTimeoutCtx(ctx, node, blockingQueryTimeout, key)

|

||||

@@ -272,12 +294,18 @@ func (s *Redis) BlpopCtx(ctx context.Context, node RedisNode, key string) (strin

|

||||

|

||||

// BlpopEx uses passed in redis connection to execute blpop command.

|

||||

// The difference against Blpop is that this method returns a bool to indicate success.

|

||||

//

|

||||

// For blocking operations, you must create a dedicated RedisNode using CreateBlockingNode.

|

||||

// See Blpop for usage examples.

|

||||

func (s *Redis) BlpopEx(node RedisNode, key string) (string, bool, error) {

|

||||

return s.BlpopExCtx(context.Background(), node, key)

|

||||

}

|

||||

|

||||

// BlpopExCtx uses passed in redis connection to execute blpop command.

|

||||

// The difference against Blpop is that this method returns a bool to indicate success.

|

||||

//

|

||||

// For blocking operations, you must create a dedicated RedisNode using CreateBlockingNode.

|

||||

// See Blpop for usage examples.

|

||||

func (s *Redis) BlpopExCtx(ctx context.Context, node RedisNode, key string) (string, bool, error) {

|

||||

if node == nil {

|

||||

return "", false, ErrNilNode

|

||||

@@ -297,12 +325,18 @@ func (s *Redis) BlpopExCtx(ctx context.Context, node RedisNode, key string) (str

|

||||

|

||||

// BlpopWithTimeout uses passed in redis connection to execute blpop command.

|

||||

// Control blocking query timeout

|

||||

//

|

||||

// For blocking operations, you must create a dedicated RedisNode using CreateBlockingNode.

|

||||

// See Blpop for usage examples.

|

||||

func (s *Redis) BlpopWithTimeout(node RedisNode, timeout time.Duration, key string) (string, error) {

|

||||

return s.BlpopWithTimeoutCtx(context.Background(), node, timeout, key)

|

||||

}

|

||||

|

||||

// BlpopWithTimeoutCtx uses passed in redis connection to execute blpop command.

|

||||

// Control blocking query timeout

|

||||

//

|

||||

// For blocking operations, you must create a dedicated RedisNode using CreateBlockingNode.

|

||||

// See Blpop for usage examples.

|

||||

func (s *Redis) BlpopWithTimeoutCtx(ctx context.Context, node RedisNode, timeout time.Duration,

|

||||

key string) (string, error) {

|

||||

if node == nil {

|

||||

@@ -630,6 +664,28 @@ func (s *Redis) GetDelCtx(ctx context.Context, key string) (string, error) {

|

||||

return val, err

|

||||

}

|

||||

|

||||

// GetEx is the implementation of redis getex command.

|

||||

// Available since: redis version 6.2.0

|

||||

func (s *Redis) GetEx(key string, seconds int) (string, error) {

|

||||

return s.GetExCtx(context.Background(), key, seconds)

|

||||

}

|

||||

|

||||

// GetExCtx is the implementation of redis getex command.

|

||||

// Available since: redis version 6.2.0

|

||||

func (s *Redis) GetExCtx(ctx context.Context, key string, seconds int) (string, error) {

|

||||

conn, err := getRedis(s)

|

||||

if err != nil {

|

||||

return "", err

|

||||

}

|

||||

|

||||

val, err := conn.GetEx(ctx, key, time.Duration(seconds)*time.Second).Result()

|

||||

if errors.Is(err, red.Nil) {

|

||||

return "", nil

|

||||

}

|

||||

|

||||

return val, err

|

||||

}

|

||||

|

||||

// GetSet is the implementation of redis getset command.

|

||||

func (s *Redis) GetSet(key, value string) (string, error) {

|

||||

return s.GetSetCtx(context.Background(), key, value)

|

||||

@@ -1840,6 +1896,29 @@ func (s *Redis) XInfoStreamCtx(ctx context.Context, stream string) (*red.XInfoSt

|

||||

|

||||

// XReadGroup reads messages from Redis streams as part of a consumer group.

|

||||

// It allows for distributed processing of stream messages with automatic message delivery semantics.

|

||||

//

|

||||

// For blocking operations, you must create a dedicated RedisNode using CreateBlockingNode to avoid

|

||||

// exhausting the connection pool. Blocking commands hold connections for extended periods and should

|

||||

// not share the regular connection pool.

|

||||

//

|

||||

// Example usage:

|

||||

//

|

||||

// node, err := redis.CreateBlockingNode(rds)

|

||||

// if err != nil {

|

||||

// // handle error

|

||||

// }

|

||||

// defer node.Close()

|

||||

//

|

||||

// streams, err := rds.XReadGroup(

|

||||

// node, // RedisNode created with CreateBlockingNode

|

||||

// "mygroup", // consumer group name

|

||||

// "consumer1", // consumer ID

|

||||

// 10, // max number of messages to read

|

||||

// 5*time.Second, // block duration

|

||||

// false, // noAck flag

|

||||

// "mystream", // stream name

|

||||

// )

|

||||

//

|

||||

// Doesn't benefit from pooling redis connections of blocking queries.

|

||||

func (s *Redis) XReadGroup(node RedisNode, group string, consumerId string, count int64,

|

||||

block time.Duration, noAck bool, streams ...string) ([]red.XStream, error) {

|

||||

@@ -1847,6 +1926,10 @@ func (s *Redis) XReadGroup(node RedisNode, group string, consumerId string, coun

|

||||

}

|

||||

|

||||

// XReadGroupCtx is the context-aware version of XReadGroup.

|

||||

//

|

||||

// For blocking operations, you must create a dedicated RedisNode using CreateBlockingNode to avoid

|

||||

// exhausting the connection pool. See XReadGroup for usage examples.

|

||||

//

|

||||

// Doesn't benefit from pooling redis connections of blocking queries.

|

||||

func (s *Redis) XReadGroupCtx(ctx context.Context, node RedisNode, group string, consumerId string,

|

||||

count int64, block time.Duration, noAck bool, streams ...string) ([]red.XStream, error) {

|

||||

|

||||

@@ -1104,6 +1104,45 @@ func TestRedis_GetDel(t *testing.T) {

|

||||

})

|

||||

}

|

||||

|

||||

func TestRedis_GetEx(t *testing.T) {

|

||||

t.Run("get_ex", func(t *testing.T) {

|

||||

runOnRedis(t, func(client *Redis) {

|

||||

val, err := client.GetEx("getex_key", 10)

|

||||

assert.Equal(t, "", val)

|

||||

assert.Nil(t, err)

|

||||

|

||||

err = client.Set("getex_key", "getex_value")

|

||||

assert.Nil(t, err)

|

||||

|

||||

val, err = client.GetEx("getex_key", 10)

|

||||

assert.Nil(t, err)

|

||||

assert.Equal(t, "getex_value", val)

|

||||

val, err = client.Get("getex_key")

|

||||

assert.Nil(t, err)

|

||||

assert.Equal(t, "getex_value", val)

|

||||

|

||||

ttl, err := client.Ttl("getex_key")

|

||||

assert.Nil(t, err)

|

||||

assert.True(t, ttl > 0 && ttl <= 10)

|

||||

|

||||

val, err = client.GetEx("getex_key", 5)

|

||||

assert.Nil(t, err)

|

||||

assert.Equal(t, "getex_value", val)

|

||||

|

||||

ttl, err = client.Ttl("getex_key")

|

||||

assert.Nil(t, err)

|

||||

assert.True(t, ttl > 0 && ttl <= 5)

|

||||

})

|

||||

})

|

||||

|

||||

t.Run("get_ex_with_error", func(t *testing.T) {

|

||||

runOnRedisWithError(t, func(client *Redis) {

|

||||

_, err := newRedis(client.Addr, badType()).GetEx("hello", 10)

|

||||

assert.Error(t, err)

|

||||

})

|

||||

})

|

||||

}

|

||||

|

||||

func TestRedis_GetSet(t *testing.T) {

|

||||

t.Run("set_get", func(t *testing.T) {

|

||||

runOnRedis(t, func(client *Redis) {

|

||||

|

||||

@@ -13,7 +13,37 @@ type ClosableNode interface {

|

||||

Close()

|

||||

}

|

||||

|

||||

// CreateBlockingNode returns a ClosableNode.

|

||||

// CreateBlockingNode creates a dedicated RedisNode for blocking operations.

|

||||

//

|

||||

// Blocking Redis commands (like BLPOP, BRPOP, XREADGROUP with block parameter) hold connections

|

||||

// for extended periods while waiting for data. Using them with the regular Redis connection pool

|

||||

// can exhaust all available connections, causing other operations to fail or timeout.

|

||||

//

|

||||

// CreateBlockingNode creates a separate Redis client with a minimal connection pool (size 1) that

|

||||

// is dedicated to blocking operations. This ensures blocking commands don't interfere with regular

|

||||

// Redis operations.

|

||||

//

|

||||

// Example usage:

|

||||

//

|

||||

// rds := redis.MustNewRedis(redis.RedisConf{

|

||||

// Host: "localhost:6379",

|

||||

// Type: redis.NodeType,

|

||||

// })

|

||||

//

|

||||

// // Create a dedicated node for blocking operations

|

||||

// node, err := redis.CreateBlockingNode(rds)

|

||||

// if err != nil {

|

||||

// // handle error

|

||||

// }

|

||||

// defer node.Close() // Important: close the node when done

|

||||

//

|

||||

// // Use the node for blocking operations

|

||||

// value, err := rds.Blpop(node, "mylist")

|

||||

// if err != nil {

|

||||

// // handle error

|

||||

// }

|

||||

//

|

||||

// The returned ClosableNode must be closed when no longer needed to release resources.

|

||||

func CreateBlockingNode(r *Redis) (ClosableNode, error) {

|

||||

timeout := readWriteTimeout + blockingQueryTimeout

|

||||

|

||||

|

||||

@@ -70,25 +70,16 @@ func getTaggedFieldValueMap(v reflect.Value) (map[string]any, error) {

|

||||

}

|

||||

|

||||

func getValueInterface(value reflect.Value) (any, error) {

|

||||

switch value.Kind() {

|

||||

case reflect.Ptr:

|

||||

if !value.CanInterface() {

|

||||

return nil, ErrNotReadableValue

|

||||

}

|

||||

|

||||

if value.IsNil() {

|

||||

baseValueType := mapping.Deref(value.Type())

|

||||

value.Set(reflect.New(baseValueType))

|

||||

}

|

||||

|

||||

return value.Interface(), nil

|

||||

default:

|

||||

if !value.CanAddr() || !value.Addr().CanInterface() {

|

||||

return nil, ErrNotReadableValue

|

||||

}

|

||||

|

||||

return value.Addr().Interface(), nil

|

||||

if !value.CanAddr() || !value.Addr().CanInterface() {

|

||||

return nil, ErrNotReadableValue

|

||||

}

|

||||

|

||||

if value.Kind() == reflect.Pointer && value.IsNil() {

|

||||

baseValueType := mapping.Deref(value.Type())

|

||||

value.Set(reflect.New(baseValueType))

|

||||

}

|

||||

|

||||

return value.Addr().Interface(), nil

|

||||

}

|

||||

|

||||

func isScanFailed(err error) bool {

|

||||

|

||||

@@ -4,7 +4,9 @@ import (

|

||||

"context"

|

||||

"database/sql"

|

||||

"errors"

|

||||

"reflect"

|

||||

"testing"

|

||||

"time"

|

||||

|

||||

"github.com/DATA-DOG/go-sqlmock"

|

||||

"github.com/stretchr/testify/assert"

|

||||

@@ -1575,6 +1577,782 @@ func TestAnonymousStructPrError(t *testing.T) {

|

||||

})

|

||||

}

|

||||

|

||||

func TestUnmarshalRowsZeroValueStructPtr(t *testing.T) {

|

||||

secondNamePtr := "second_ptr"

|

||||

secondAgePtr := int64(30)

|

||||

thirdNamePtr := "third_ptr"

|

||||

thirdAgePtr := int64(0)

|

||||

|

||||

expect := []struct {

|

||||

Name string

|

||||

NamePtr *string

|

||||

Age int64

|

||||

AgePtr *int64

|

||||

}{

|

||||

{

|

||||

Name: "first",

|

||||

NamePtr: nil,

|

||||

Age: 2,

|

||||

AgePtr: nil,

|

||||

},

|

||||

{

|

||||

Name: "second",

|

||||

NamePtr: &secondNamePtr,

|

||||

Age: 3,

|

||||

AgePtr: &secondAgePtr,

|

||||

},

|

||||

{

|

||||

Name: "",

|

||||

NamePtr: &thirdNamePtr,

|

||||

Age: 0,

|

||||

AgePtr: &thirdAgePtr,

|

||||

},

|

||||

}

|

||||

|

||||

var value []struct {

|

||||

Age int64 `db:"age"`

|

||||

AgePtr *int64 `db:"age_ptr"`

|

||||

Name string `db:"name"`

|

||||

NamePtr *string `db:"name_ptr"`

|

||||

}

|

||||

|

||||

dbtest.RunTest(t, func(db *sql.DB, mock sqlmock.Sqlmock) {

|

||||

rs := sqlmock.NewRows([]string{"name", "name_ptr", "age", "age_ptr"}).

|

||||

AddRow("first", nil, 2, nil).

|

||||

AddRow("second", "second_ptr", 3, 30).

|

||||

AddRow("", "third_ptr", 0, 0)

|

||||

|

||||

mock.ExpectQuery("select (.+) from users where user=?").

|

||||

WithArgs("anyone").WillReturnRows(rs)

|

||||

|

||||

assert.Nil(t, query(context.Background(), db, func(rows *sql.Rows) error {

|

||||

return unmarshalRows(&value, rows, true)

|

||||

}, "select name, name_ptr, age, age_ptr from users where user=?", "anyone"))

|

||||

|

||||

assert.Equal(t, 3, len(value), "should return 3 rows")

|

||||

|

||||

for i, each := range expect {

|

||||

|

||||

assert.Equal(t, each.Name, value[i].Name)

|

||||

assert.Equal(t, each.Age, value[i].Age)

|

||||

|

||||

if each.NamePtr == nil {

|

||||

assert.Nil(t, value[i].NamePtr)

|

||||

} else {

|

||||

assert.NotNil(t, value[i].NamePtr)

|

||||

assert.Equal(t, *each.NamePtr, *value[i].NamePtr)

|

||||

}

|

||||

|

||||

if each.AgePtr == nil {

|

||||

assert.Nil(t, value[i].AgePtr)

|

||||

} else {

|

||||

assert.NotNil(t, value[i].AgePtr)

|

||||

assert.Equal(t, *each.AgePtr, *value[i].AgePtr)

|

||||

}

|

||||

}

|

||||

})

|

||||

}

|

||||

|

||||

func TestUnmarshalRowsAllNullStructPtrFields(t *testing.T) {

|

||||

expect := []struct {

|

||||

NamePtr *string

|

||||

AgePtr *int64

|

||||

}{

|

||||

{

|

||||

NamePtr: nil,

|

||||

AgePtr: nil,

|

||||

},

|

||||

{

|

||||

NamePtr: stringPtr("second"),

|

||||

AgePtr: int64Ptr(30),

|

||||

},

|

||||

{

|

||||

NamePtr: nil,