Mirror of https://github.com/zeromicro/go-zero.git, synced 2026-05-12 01:10:00 +08:00

Compare commits: `tools/goct`...`master` (142 commits)

| SHA1 |
|---|
| 3738be1945 |
| 5b74b9ab7b |
| 4a67261b7b |
| 22bdae0787 |
| e8675d6a9a |
| e441c44975 |
| 3f91a79a2b |
| 8c47c01739 |
| f59a1cb0de |
| d44ff6ddc8 |
| 6ffa9cabec |
| 0069721586 |
| ba9c275853 |
| 9a6447ab5c |
| 004995f06a |
| c12c82b2f6 |
| 85d770d340 |
| 8cd7f7a2d8 |
| db3101361b |
| eb2302b71e |
| 04ed637366 |
| 567087a715 |
| 4d2e64a417 |
| b01831b4c5 |
| d1a014955c |
| ec802e25a6 |
| 8a2e09dfd1 |
| 220d438fe7 |
| 2cd96146fa |
| 7e96317fad |
| 70728ce2e2 |
| 6a72a735d4 |
| b139a82c2e |
| bdddf1f30c |
| 9b74b7e09e |
| 4d5ed2c45d |
| a2310bf9d7 |
| be846eba01 |
| b20f0e3d60 |
| e2bb65d43c |
| 94e2f5bd12 |
| 173f76acf9 |
| 6e1af75635 |
| 84ff755e61 |
| 4b9d23aef5 |
| 97b9aebe99 |
| 8e7e5695eb |
| 4b4751e76c |
| fcec494ea8 |
| 42117c2dcc |
| 4b631f3785 |
| f29c8612e8 |
| 35ba024103 |
| 52df1c532a |
| 39729f3756 |
| 5c9ea81db2 |
| b284664de4 |
| 1b76885040 |
| eef217522b |
| 6bd0d169d5 |
| 3d291328d8 |
| 858f8ca82e |
| 4ff3975c5a |
| 7b23f73268 |
| 918a7be698 |
| 0a724447cd |
| 9e425893a7 |
| 4de13b6cc8 |
| c6f75532fa |
| fdf4ccf057 |
| b333ed245b |
| 8f1576df36 |
| 72dd970969 |
| 29b65e12c1 |
| 577a611dc3 |
| 75941aedd4 |
| c7065171d7 |
| 052de3b552 |
| 866613af8c |
| 3d4f6a5e16 |
| d1d47d02d5 |
| d6c876860b |
| 98423ca948 |
| 4e52d77ad8 |
| 1fc2cfb859 |
| 942cdae41d |
| e9c3607bc6 |
| d1603e9166 |
| e30317e9c4 |
| 568f9ce007 |
| dcb309065a |
| bf8e17a686 |
| b2ebbfce62 |
| 2b10a6a223 |
| 80c320b46e |
| bea9d150a1 |
| 3f756a2cbf |
| bbe5bbb0c0 |
| 5ad2278a69 |
| 77763fe748 |
| 538c4fb5c7 |
| 315fb2fe0a |
| e382887eb8 |
| cf21cb2b0b |
| 61e8894c31 |
| 7a6c3c8129 |
| 875fec3e1a |
| 60128c2100 |
| ce6d0e3ea7 |
| fa85c84af3 |
| 440884105e |
| 271f10598f |
| cf55a88ce3 |
| c1c786b14a |
| 988fb9d9bf |
| d212c81bca |
| bc43df2641 |
| 351b8cb37b |
| 0d681a2e29 |
| 5ea027c5de |
| 5de6112dcd |
| 4fb51723b7 |
| 06502d1115 |
| 3854d6dd00 |
| 895854913a |
| ef753b8857 |
| 9c16fede73 |
| ce11adb5e4 |
| 894e8b1218 |
| 2ec7e432dd |
| 870e8352c1 |
| de42f27e03 |
| 955b8016aa |
| d728a3b2d9 |
| 0c205a71fc |
| a8c0199d96 |
| 032a266ec4 |
| 40b75fbb9b |
| afad55045b |
| 5f54f06ee5 |
| 20f56ae1d0 |
| 73d6fcfccd |

**.github/copilot-instructions.md** (344 changes)

`@@ -0,0 +1,344 @@`

# GitHub Copilot Instructions for go-zero

This document provides guidelines for GitHub Copilot when assisting with development in the go-zero project.

## Project Overview

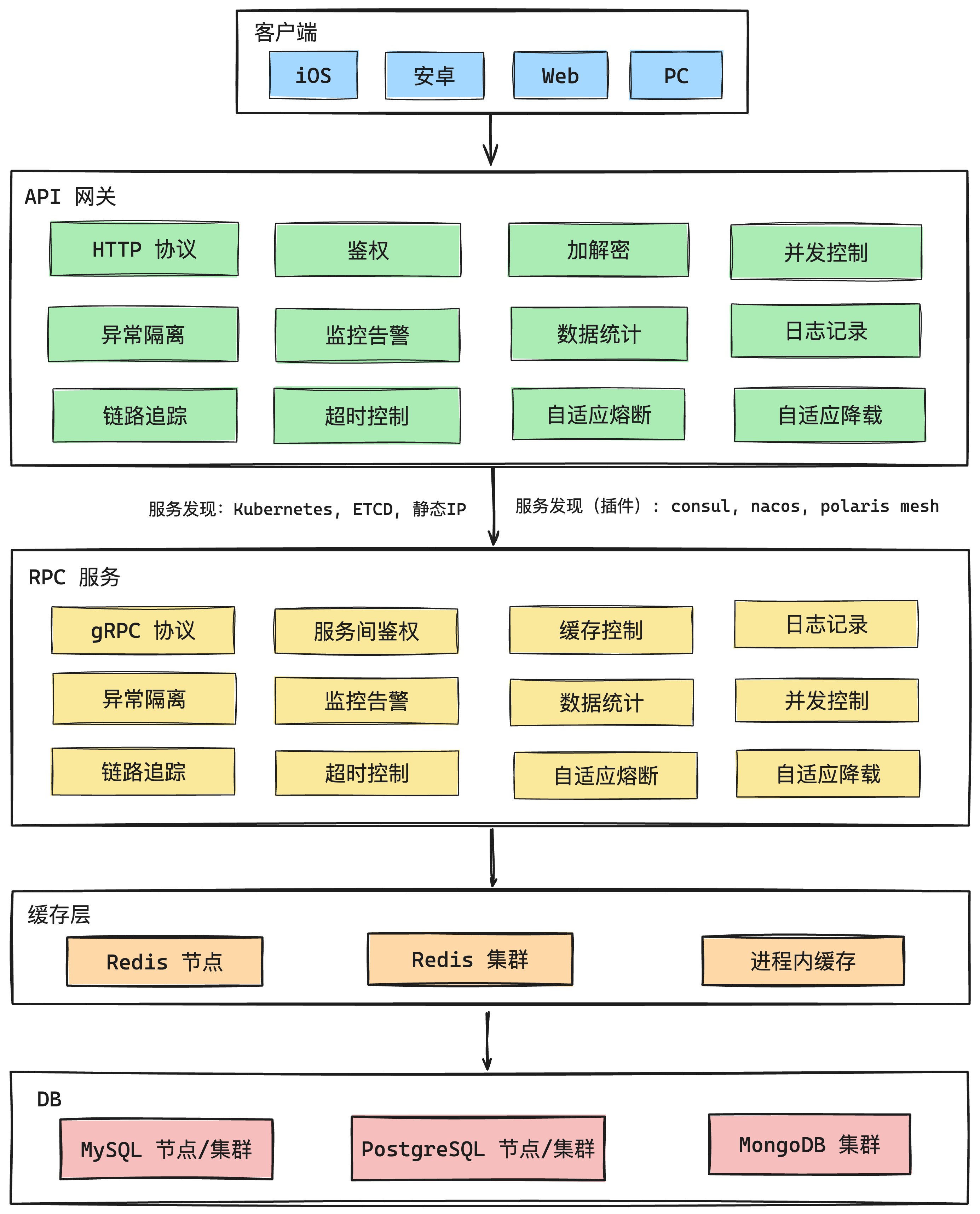

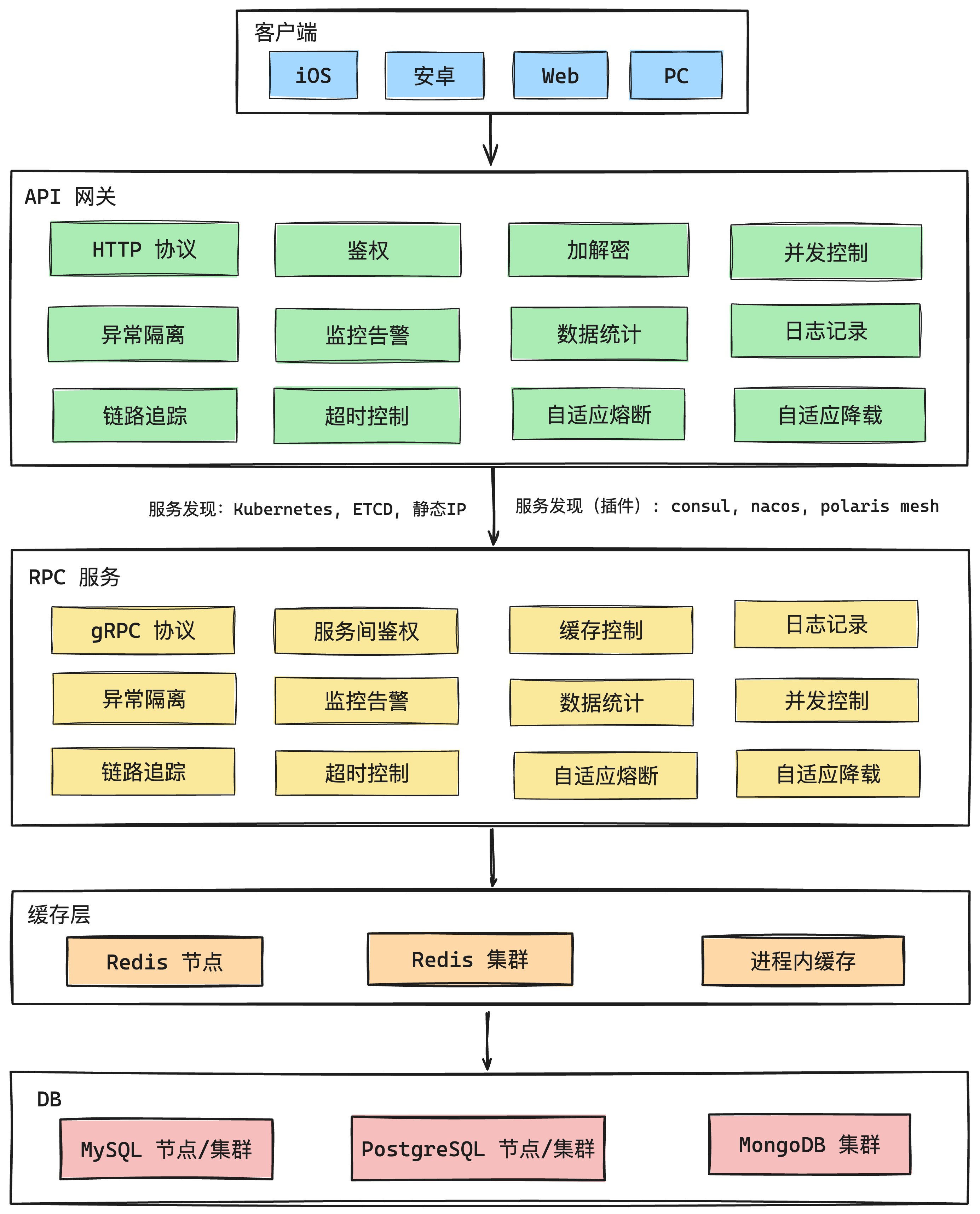

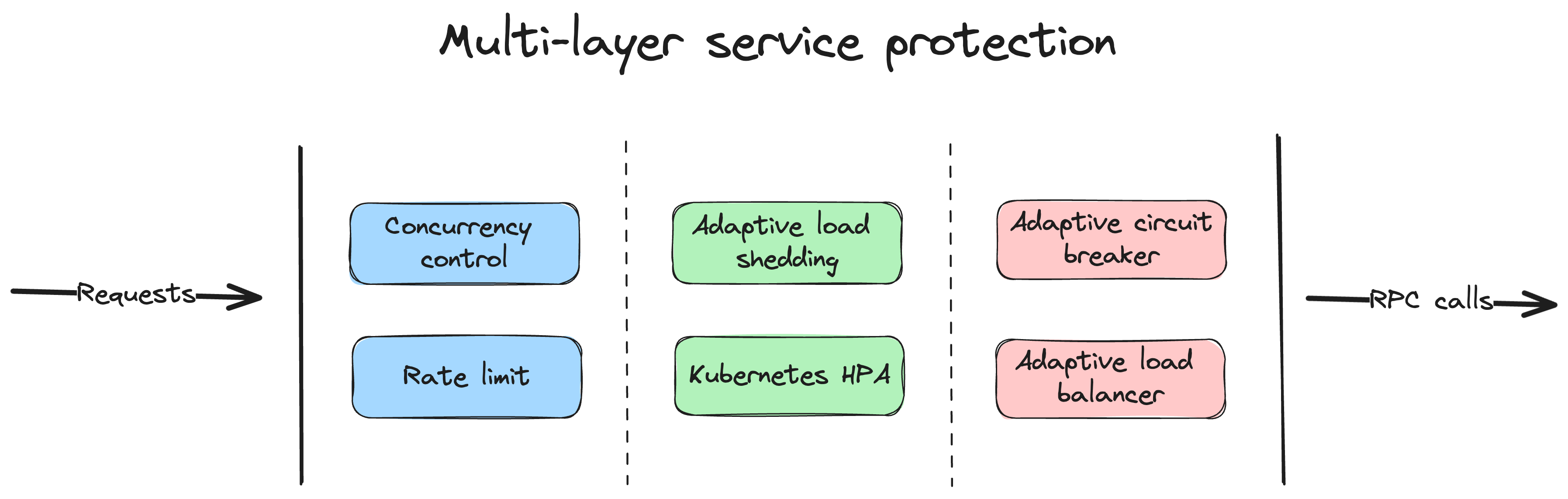

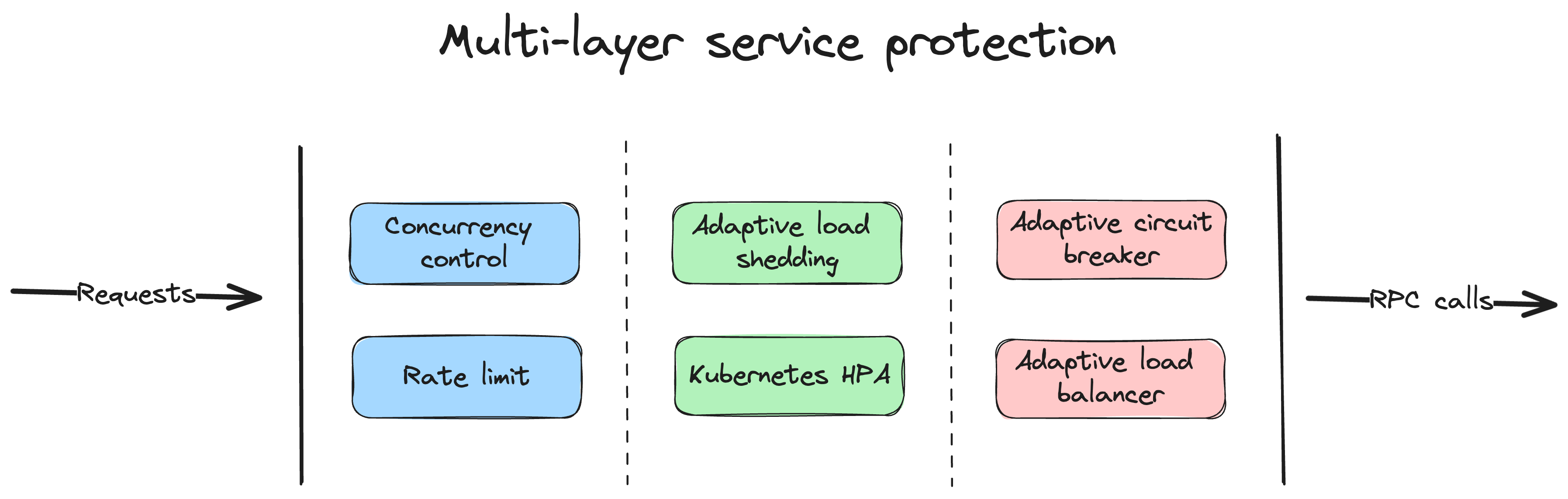

go-zero is a web and RPC framework with many built-in engineering practices, designed to keep busy services stable through resilience design. It has been serving sites with tens of millions of users for years.

### Key Architecture Components

- **REST API framework** (`rest/`) - HTTP service framework with middleware chain support
- **RPC framework** (`zrpc/`) - gRPC-based RPC framework with etcd service discovery and p2c_ewma load balancing
- **Gateway** (`gateway/`) - API gateway supporting both HTTP and gRPC upstreams with proto-based routing
- **MCP Server** (`mcp/`) - Model Context Protocol server for AI agent integration via SSE
- **Core utilities** (`core/`) - Production-grade components:
  - Resilience: circuit breakers (`breaker/`), rate limiters (`limit/`), adaptive load shedding (`load/`)
  - Storage: SQL with cache (`stores/sqlc/`), Redis (`stores/redis/`), MongoDB (`stores/mongo/`)
  - Concurrency: MapReduce (`mr/`), worker pools (`executors/`), sync primitives (`syncx/`)
  - Observability: metrics (`metric/`), tracing (`trace/`), structured logging (`logx/`)
- **Code generation tool** (`tools/goctl/`) - CLI tool for generating Go code from `.api` and `.proto` files

|

## Coding Standards and Conventions

|

||||||

|

|

||||||

|

### Code Style

|

||||||

|

|

||||||

|

1. **Follow Go conventions**: Use `gofmt` for formatting, follow effective Go practices

|

||||||

|

2. **Package naming**: Use lowercase, single-word package names when possible

|

||||||

|

3. **Error handling**: Always handle errors explicitly, use `errorx.BatchError` for multiple errors

|

||||||

|

4. **Context propagation**: Always pass `context.Context` as the first parameter for functions that may block

|

||||||

|

5. **Configuration structures**: Use struct tags with JSON annotations, defaults, and validation

|

||||||

|

|

||||||

|

**Pattern**: All service configs embed `service.ServiceConf` for common fields (Name, Log, Mode, Telemetry)

|

||||||

|

```go

|

||||||

|

type Config struct {

|

||||||

|

service.ServiceConf // Always embed for services

|

||||||

|

Host string `json:",default=0.0.0.0"`

|

||||||

|

Port int // Required field (no default)

|

||||||

|

Timeout int64 `json:",default=3000"` // Timeouts in milliseconds

|

||||||

|

Optional string `json:",optional"` // Optional field

|

||||||

|

Mode string `json:",default=pro,options=dev|test|rt|pre|pro"` // Validated options

|

||||||

|

}

|

||||||

|

```

|

||||||

|

|

||||||

|

**Service modes**: `dev`/`test`/`rt` disable load shedding and stats; `pre`/`pro` enable all resilience features

|

||||||

|

|

||||||

|

### Interface Design

1. **Small interfaces**: Follow Go's preference for small, focused interfaces
2. **Context methods**: Provide both context and non-context versions of methods
3. **Options pattern**: Use functional options for complex configuration

Example:

```go
func (c *Client) Get(key string, val any) error {
	return c.GetCtx(context.Background(), key, val)
}

func (c *Client) GetCtx(ctx context.Context, key string, val any) error {
	// implementation
}
```

### Testing Patterns

1. **Test file naming**: Use `*_test.go` suffix
2. **Test function naming**: Use `TestFunctionName` pattern
3. **Use testify/assert**: Prefer `assert` package for assertions
4. **Table-driven tests**: Use table-driven tests for multiple scenarios
5. **Mock interfaces**: Use `go.uber.org/mock` for mocking
6. **Test helpers**: Use `redistest`, `mongtest` helpers for database testing

Example test pattern:

```go
func TestSomething(t *testing.T) {
	tests := []struct {
		name     string
		input    string
		expected string
		wantErr  bool
	}{
		{"valid case", "input", "output", false},
		{"error case", "bad", "", true},
	}

	for _, tt := range tests {
		t.Run(tt.name, func(t *testing.T) {
			result, err := SomeFunction(tt.input)
			if tt.wantErr {
				assert.Error(t, err)
				return
			}
			assert.NoError(t, err)
			assert.Equal(t, tt.expected, result)
		})
	}
}
```

## Framework-Specific Guidelines

### REST API Development

1. **API Definition**: Use `.api` files to define REST APIs and generate code with goctl
2. **Handler pattern**: Separate business logic into logic packages (handlers call the logic layer)
3. **Middleware chain**: Middlewares are composed via the `chain.Chain` interface; use `Append()` or `Prepend()` to control order
   - Built-in middlewares (all in `rest/handler/`): tracing, logging, metrics, recovery, breaker, shedding, timeout, maxconns, maxbytes, gunzip
   - Custom middleware: `rest.Middleware` has the signature `func(next http.HandlerFunc) http.HandlerFunc`, while the lower-level `rest/chain` uses `func(http.Handler) http.Handler`; call `next.ServeHTTP(w, r)` to continue the chain
4. **Response handling**: Use `httpx.WriteJson(w, code, v)` for JSON responses
5. **Error handling**: Use `httpx.Error(w, err)` or `httpx.ErrorCtx(ctx, w, err)` for HTTP error responses
6. **Route registration**: Routes are defined with `Method`, `Path`, and `Handler`; path parameters use `:param` syntax

### RPC Development

1. **Protocol Buffers**: Use protobuf for service definitions, generate code with goctl
2. **Service discovery**: Use etcd for dynamic service registration/discovery, or direct endpoints for static routing
3. **Load balancing**: Default is `p2c_ewma` (power of 2 choices with EWMA), configurable via `BalancerName`
4. **Client configuration**: Support `Etcd`, `Endpoints`, or `Target` - use `BuildTarget()` to construct connection string
5. **Interceptors**: Implement gRPC interceptors for cross-cutting concerns (auth, logging, metrics)
6. **Health checks**: gRPC health checks enabled by default (`Health: true`)

### Database Operations

1. **SQL operations**: Use the `sqlx.SqlConn` interface and prefer its `Ctx`-suffixed methods (e.g., `ExecCtx`, `QueryRowCtx`) so queries respect context cancellation
2. **Caching pattern**: `stores/sqlc` provides `CachedConn` for an automatic cache-aside pattern
   - `QueryRowCtx`: Query with a cache key; populates the cache on a miss
   - `ExecCtx`: Execute a statement, then delete the given cache keys
3. **Transactions**: Use `sqlx.SqlConn.TransactCtx()` to run a function inside a transaction; the callback receives a transaction `Session`
4. **Connection pooling**: Managed automatically (64 max idle/open connections, 1-minute max lifetime)
5. **Test helpers**: Use `redistest.CreateRedis(t)` for Redis, SQL mocks for DB testing

Example cache pattern:

```go
err := c.QueryRowCtx(ctx, &dest, key, func(ctx context.Context, conn sqlx.SqlConn, v any) error {
	return conn.QueryRowCtx(ctx, v, query, args...)
})
```

### Configuration Management

1. **YAML configuration**: Use YAML for configuration files
2. **Environment variables**: Support environment variable overrides
3. **Validation**: Include proper validation for configuration parameters
4. **Sensible defaults**: Provide reasonable default values
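
The sketch below applies the four configuration rules using only the standard library. go-zero derives defaults and option checks from struct tags when it loads YAML. Here the steps are spelled out by hand, and the `APP_MODE`/`APP_TIMEOUT` variable names are hypothetical.

```go
package main

import (
	"fmt"
	"os"
	"strconv"
)

// Config is a hypothetical service config, as if decoded from YAML.
type Config struct {
	Mode    string
	Timeout int64 // milliseconds
}

// applyDefaults fills zero values, like `json:",default=..."` tags do.
func applyDefaults(c *Config) {
	if c.Mode == "" {
		c.Mode = "pro"
	}
	if c.Timeout == 0 {
		c.Timeout = 3000
	}
}

// applyEnv lets environment variables override file values.
func applyEnv(c *Config, getenv func(string) string) error {
	if v := getenv("APP_MODE"); v != "" {
		c.Mode = v
	}
	if v := getenv("APP_TIMEOUT"); v != "" {
		n, err := strconv.ParseInt(v, 10, 64)
		if err != nil {
			return fmt.Errorf("APP_TIMEOUT: %w", err)
		}
		c.Timeout = n
	}
	return nil
}

// validate enforces the allowed options, like `options=dev|test|rt|pre|pro`.
func validate(c Config) error {
	switch c.Mode {
	case "dev", "test", "rt", "pre", "pro":
	default:
		return fmt.Errorf("mode %q not in [dev test rt pre pro]", c.Mode)
	}
	if c.Timeout <= 0 {
		return fmt.Errorf("timeout must be positive, got %d", c.Timeout)
	}
	return nil
}

func main() {
	var c Config // as if loaded from a YAML file that omitted both fields
	applyDefaults(&c)
	if err := applyEnv(&c, os.Getenv); err != nil {
		fmt.Println("config error:", err)
		return
	}
	if err := validate(c); err != nil {
		fmt.Println("config error:", err)
		return
	}
	fmt.Printf("%+v\n", c)
}
```

Passing `getenv` as a parameter keeps the override step testable without touching the real process environment.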

## Error Handling Best Practices

1. **Wrap errors**: Use `fmt.Errorf` with `%w` verb to wrap errors
2. **Custom errors**: Define custom error types when needed
3. **Error logging**: Log errors appropriately with context
4. **Graceful degradation**: Implement fallback mechanisms

## Performance Considerations

1. **Resource pools**: Use connection pools and worker pools
2. **Circuit breakers**: Implement circuit breaker patterns for external calls
3. **Rate limiting**: Apply rate limiting to protect services
4. **Load shedding**: Implement adaptive load shedding
5. **Metrics**: Add appropriate metrics and monitoring

## Security Guidelines

1. **Input validation**: Validate all input parameters
2. **SQL injection prevention**: Use parameterized queries
3. **Authentication**: Implement proper JWT token handling
4. **HTTPS**: Support TLS/HTTPS configurations
5. **CORS**: Configure CORS appropriately for web APIs

## Documentation Standards

1. **Package documentation**: Include package-level documentation
2. **Function documentation**: Document exported functions with examples
3. **API documentation**: Maintain API documentation in sync
4. **README updates**: Update README for significant changes

## GitHub Issue Management

### Understanding and Categorizing Issues

When analyzing GitHub issues, consider these common categories:

1. **Bug Reports**: Stack traces, version info, reproduction steps
2. **Feature Requests**: Use case, proposed solution, alternatives
3. **Questions**: Usage, configuration, or architecture
4. **Documentation Issues**: Missing, unclear, or incorrect docs
5. **Performance Issues**: Benchmarks, profiling data, resource usage

### Issue Analysis Checklist

- Identify affected component (REST, RPC, Gateway, MCP, Core utilities, goctl)
- Check versions (go-zero, Go)
- Look for reproduction steps or code examples
- Review code snippets, logs, or stack traces
- Check if related to resilience features (breaker, load shedding, rate limiting)
- Determine production impact

### Responding to Issues

Be helpful and professional. Ask clarifying questions when needed. Reference relevant documentation and code files. Provide code examples following project conventions. Suggest workarounds when applicable.

### Chinese to English Translation

go-zero has an international user base. When you encounter issues or comments written in Chinese, translate them into English so that all contributors can participate in discussions.

#### Translation Guidelines

1. **Update issue titles**: Edit the issue title so that it contains only the English translation
2. **Translate comments in place**: Add a comment with the English translation, followed by the original Chinese text
3. **Keep original Chinese**: After translating, include the original Chinese text in a blockquote for verification
4. **Encourage English communication**: Politely suggest users write in English for better collaboration
5. **Maintain technical accuracy**: Preserve technical terms, component names, and code exactly
6. **Translate naturally**: Avoid literal word-by-word translation; use idiomatic English
7. **Preserve formatting**: Keep markdown formatting, code blocks, and links intact
8. **Keep URLs unchanged**: Don't translate URLs or file paths

#### Common Technical Terms (Chinese → English)

- 框架 → **Framework** | 中间件 → **Middleware** | 负载均衡 → **Load Balancing**
- 熔断器 → **Circuit Breaker** | 限流 → **Rate Limiting** | 降载/过载保护 → **Load Shedding**
- 服务发现 → **Service Discovery** | 配置 → **Configuration** | 弹性/容错 → **Resilience** | 微服务 → **Microservices**

#### Translation Example

**Original Chinese Title:** `goctl 执行环境问题`

**Updated Title:** `goctl Execution Environment Issue`

**Original Chinese Comment:** `我在项目中遇到熔断器配置问题`

**Translation in Comment:**

```markdown
I encountered a circuit breaker configuration issue in my project.

> Original (原文): 我在项目中遇到熔断器配置问题
```

### Common Issue Patterns and Solutions

#### Configuration Issues

- Check `service.ServiceConf` embedding and struct tags
- Verify YAML syntax, defaults, and validation rules
- Reference: [rest/config.go](rest/config.go), [zrpc/config.go](zrpc/config.go)

#### Code Generation (goctl) Issues

- Verify `.api` or `.proto` file syntax and goctl version
- Reference: `tools/goctl/` directory

#### RPC Connection Issues

- Check etcd configuration, service discovery, and endpoints
- Verify load balancing settings (p2c_ewma)

#### Database/Cache Issues

- Verify `sqlx.SqlConn` usage with context
- Check cache key generation, invalidation, and connection pools
- Use test helpers (`redistest`, `mongtest`)

#### Performance Issues

- Check if load shedding is enabled (mode: `pre`/`pro`)
- Review circuit breaker thresholds, rate limiting, and context timeouts

### Referencing Codebase

When explaining issues, reference specific files and patterns:

- REST API: `rest/`, `rest/handler/`, `rest/httpx/`
- RPC: `zrpc/`, `zrpc/internal/`
- Core utilities: `core/breaker/`, `core/limit/`, `core/load/`, etc.
- Gateway: `gateway/`
- MCP: `mcp/`
- Code generation: `tools/goctl/`
- Examples: `adhoc/` directory contains various examples

### Encouraging Best Practices

When responding to issues, gently guide users toward:

- Proper error handling with context
- Using resilience features (breakers, rate limiters)
- Following testing patterns with table-driven tests
- Implementing proper resource cleanup
- Reading existing documentation in `docs/` and `readme.md`

## Common Patterns to Follow

### Service Configuration

```go
type ServiceConf struct {
	Name string
	Log  logx.LogConf
	Mode string `json:",default=pro,options=dev|test|rt|pre|pro"`
	// ... other common fields
}
```

### Middleware Implementation

```go
func SomeMiddleware() rest.Middleware {
	return func(next http.HandlerFunc) http.HandlerFunc {
		return func(w http.ResponseWriter, r *http.Request) {
			// Pre-processing
			next.ServeHTTP(w, r)
			// Post-processing
		}
	}
}
```

### Resource Management

Always implement proper resource cleanup using defer and context cancellation.

## Build and Test Commands

- Build: `go build ./...`
- Test: `go test ./...`
- Test with race detection: `go test -race ./...`
- Format: `gofmt -w .`
- Code generation:
  - REST API: `goctl api go -api *.api -dir .`
  - RPC: `goctl rpc protoc *.proto --go_out=. --go-grpc_out=. --zrpc_out=.`
  - Model from SQL: `goctl model mysql datasource -url="user:pass@tcp(host:port)/db" -table="*" -dir="./model"`

## Critical Architecture Patterns

### Resilience Design Philosophy

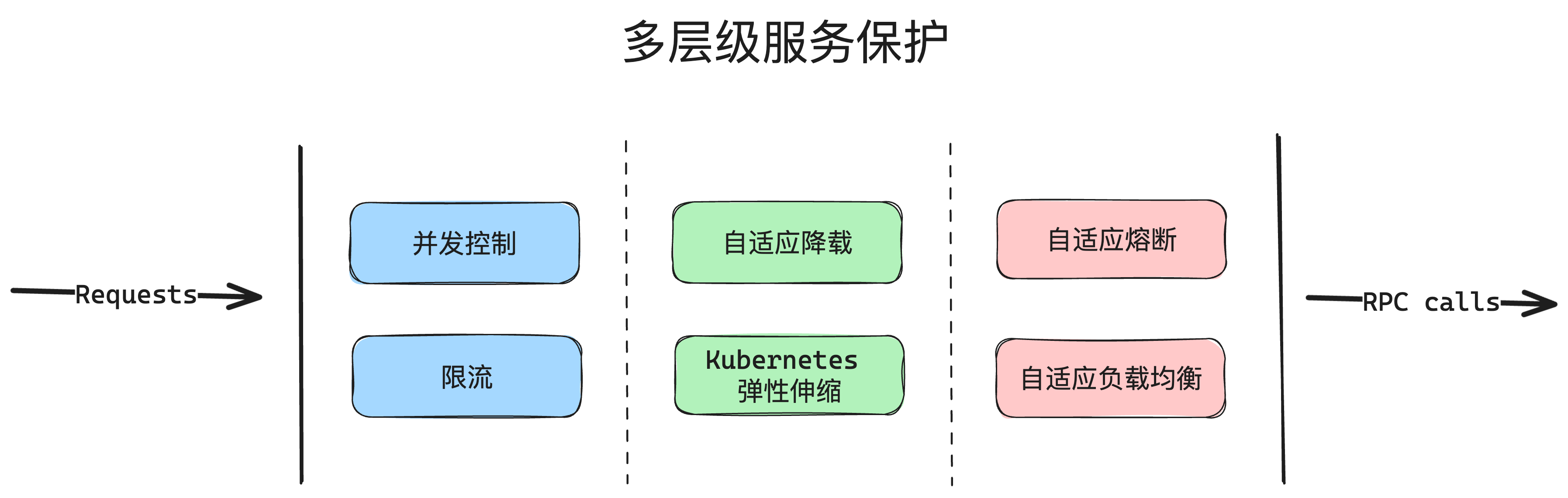

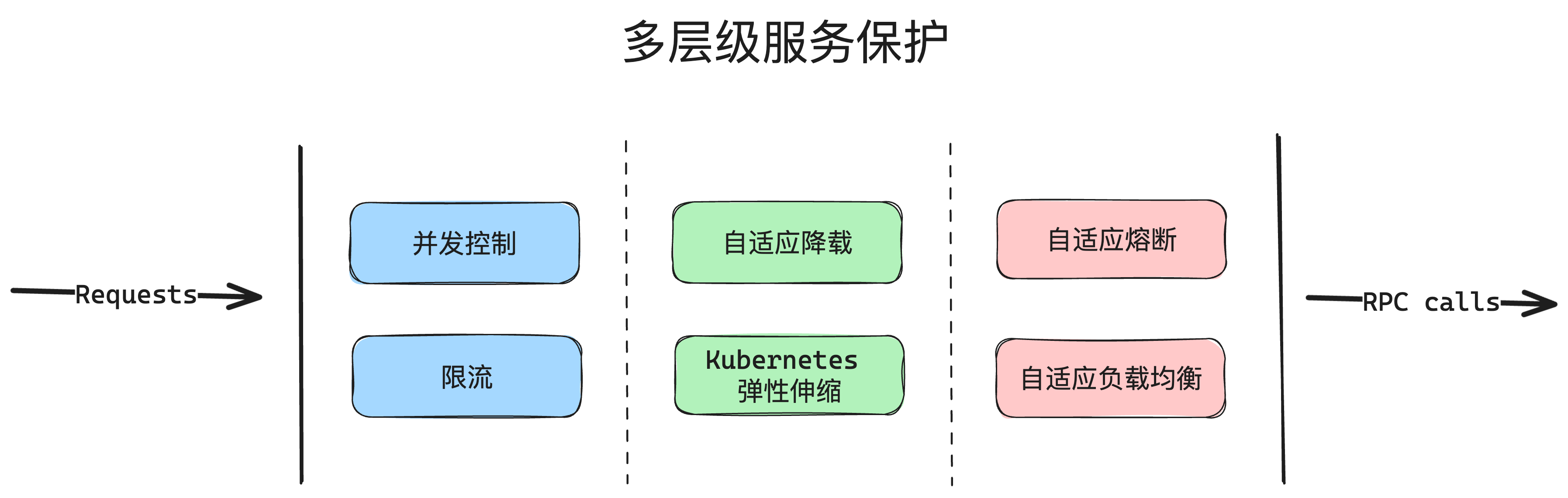

go-zero implements defense in depth with multiple protection layers:

1. **Circuit Breaker** (`core/breaker`): Google SRE breaker; tracks successes and failures and rejects requests probabilistically as the failure rate rises
2. **Adaptive Load Shedding** (`core/load`): CPU-based automatic rejection when the system is overloaded (disabled in dev/test/rt modes)
3. **Rate Limiting** (`core/limit`): Token bucket (Redis-based) and period limiters
4. **Timeout Control**: Cascading timeouts via context, set at multiple levels (client, server, handler)

### Middleware Chain Architecture

`rest/chain` provides middleware composition:

```go
// Middleware signature
type Middleware func(http.Handler) http.Handler

// Chain operations: Append and Prepend return a new Chain instead of modifying c
c := chain.New(m1, m2)
c = c.Append(m3)  // Adds to end: m1 -> m2 -> m3
c = c.Prepend(m0) // Adds to start: m0 -> m1 -> m2 -> m3
handler := c.Then(finalHandler)
```

### Concurrency Patterns

- **MapReduce** (`core/mr`): Parallel processing with worker pools - use for batch operations
- **Executors** (`core/executors`): Bulk/period executors for batching operations
- **SingleFlight** (`core/syncx`): Deduplicates concurrent identical requests
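
Here is a generic standard-library sketch (Go 1.18+) of the shape of the MapReduce pattern:

- a generator feeds items to a fixed pool of mapper goroutines;
- a reducer consumes their results.

`mapReduce` is hypothetical. The real `core/mr` package also handles cancellation and errors, which this sketch omits, so the reducer here must drain its channel.

```go
package main

import (
	"fmt"
	"sync"
)

// mapReduce runs a generate -> map -> reduce pipeline with a fixed worker pool.
func mapReduce[T, U, R any](items []T, workers int, mapper func(T) U, reducer func(<-chan U) R) R {
	if workers < 1 {
		workers = 1
	}
	in := make(chan T)
	out := make(chan U)

	// Generate: feed the items to the workers.
	go func() {
		defer close(in)
		for _, it := range items {
			in <- it
		}
	}()

	// Map: workers process items concurrently.
	var wg sync.WaitGroup
	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for it := range in {
				out <- mapper(it)
			}
		}()
	}
	// Close out once every worker has finished, so the reducer's range loop ends.
	go func() {
		wg.Wait()
		close(out)
	}()

	// Reduce: runs in the caller's goroutine and sees results in completion order.
	return reducer(out)
}

func main() {
	sum := mapReduce([]int{1, 2, 3, 4, 5}, 3,
		func(id int) int { return id * id }, // stands in for a per-item lookup
		func(results <-chan int) int {
			total := 0
			for r := range results {
				total += r
			}
			return total
		})
	fmt.Println(sum) // 55
}
```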

Remember to run tests and ensure all checks pass before submitting changes. The project emphasizes high quality, performance, and reliability, so these should be primary considerations in all development work.

**.github/workflows/codeql-analysis.yml** (8 changes)

`@@ -35,11 +35,11 @@ jobs:`

| Old | New |
|---|---|
| | |
| `steps:` | `steps:` |
| `- name: Checkout repository` | `- name: Checkout repository` |
| `uses: actions/checkout@v5` | `uses: actions/checkout@v6` |
| | |
| `# Initializes the CodeQL tools for scanning.` | `# Initializes the CodeQL tools for scanning.` |
| `- name: Initialize CodeQL` | `- name: Initialize CodeQL` |
| `uses: github/codeql-action/init@v3` | `uses: github/codeql-action/init@v4` |
| `with:` | `with:` |
| `languages: ${{ matrix.language }}` | `languages: ${{ matrix.language }}` |
| `# If you wish to specify custom queries, you can do so here or in a config file.` | `# If you wish to specify custom queries, you can do so here or in a config file.` |

`@@ -50,7 +50,7 @@ jobs:`

| Old | New |
|---|---|
| `# Autobuild attempts to build any compiled languages (C/C++, C#, or Java).` | `# Autobuild attempts to build any compiled languages (C/C++, C#, or Java).` |
| `# If this step fails, then you should remove it and run the build manually (see below)` | `# If this step fails, then you should remove it and run the build manually (see below)` |
| `- name: Autobuild` | `- name: Autobuild` |
| `uses: github/codeql-action/autobuild@v3` | `uses: github/codeql-action/autobuild@v4` |
| | |
| `# ℹ️ Command-line programs to run using the OS shell.` | `# ℹ️ Command-line programs to run using the OS shell.` |
| `# 📚 https://git.io/JvXDl` | `# 📚 https://git.io/JvXDl` |

`@@ -64,4 +64,4 @@ jobs:`

| Old | New |
|---|---|
| `# make release` | `# make release` |
| | |
| `- name: Perform CodeQL Analysis` | `- name: Perform CodeQL Analysis` |
| `uses: github/codeql-action/analyze@v3` | `uses: github/codeql-action/analyze@v4` |

**.github/workflows/go.yml** (10 changes)

`@@ -12,10 +12,10 @@ jobs:`

| Old | New |
|---|---|
| `runs-on: ubuntu-latest` | `runs-on: ubuntu-latest` |
| `steps:` | `steps:` |
| `- name: Check out code into the Go module directory` | `- name: Check out code into the Go module directory` |
| `uses: actions/checkout@v5` | `uses: actions/checkout@v6` |
| | |
| `- name: Set up Go 1.x` | `- name: Set up Go 1.x` |
| `uses: actions/setup-go@v5` | `uses: actions/setup-go@v6` |
| `with:` | `with:` |
| `go-version-file: go.mod` | `go-version-file: go.mod` |
| `check-latest: true` | `check-latest: true` |

`@@ -40,7 +40,7 @@ jobs:`

| Old | New |
|---|---|
| `run: go test -race -coverprofile=coverage.txt -covermode=atomic ./...` | `run: go test -race -coverprofile=coverage.txt -covermode=atomic ./...` |
| | |
| `- name: Codecov` | `- name: Codecov` |
| `uses: codecov/codecov-action@v5` | `uses: codecov/codecov-action@v6` |
| `with:` | `with:` |
| `files: ./coverage.txt` | `files: ./coverage.txt` |
| `flags: unittests` | `flags: unittests` |

`@@ -52,10 +52,10 @@ jobs:`

| Old | New |
|---|---|
| `runs-on: windows-latest` | `runs-on: windows-latest` |
| `steps:` | `steps:` |
| `- name: Checkout codebase` | `- name: Checkout codebase` |
| `uses: actions/checkout@v5` | `uses: actions/checkout@v6` |
| | |
| `- name: Set up Go 1.x` | `- name: Set up Go 1.x` |
| `uses: actions/setup-go@v5` | `uses: actions/setup-go@v6` |
| `with:` | `with:` |
| `# make sure Go version compatible with go-zero` | `# make sure Go version compatible with go-zero` |
| `go-version-file: go.mod` | `go-version-file: go.mod` |

**.github/workflows/issues.yml** (2 changes)

`@@ -7,7 +7,7 @@ jobs:`

| Old | New |
|---|---|
| `close-issues:` | `close-issues:` |
| `runs-on: ubuntu-latest` | `runs-on: ubuntu-latest` |
| `steps:` | `steps:` |
| `- uses: actions/stale@v9` | `- uses: actions/stale@v10` |
| `with:` | `with:` |
| `days-before-issue-stale: 365` | `days-before-issue-stale: 365` |
| `days-before-issue-close: 90` | `days-before-issue-close: 90` |

**.github/workflows/release.yaml** (2 changes)

`@@ -16,7 +16,7 @@ jobs:`

| Old | New |
|---|---|
| `- goarch: "386"` | `- goarch: "386"` |
| `goos: darwin` | `goos: darwin` |
| `steps:` | `steps:` |
| `- uses: actions/checkout@v5` | `- uses: actions/checkout@v6` |
| `- uses: zeromicro/go-zero-release-action@master` | `- uses: zeromicro/go-zero-release-action@master` |
| `with:` | `with:` |
| `github_token: ${{ secrets.GITHUB_TOKEN }}` | `github_token: ${{ secrets.GITHUB_TOKEN }}` |

**.github/workflows/reviewdog.yml** (7 changes)

`@@ -5,7 +5,12 @@ jobs:`

| Old | New |
|---|---|
| `name: runner / staticcheck` | `name: runner / staticcheck` |
| `runs-on: ubuntu-latest` | `runs-on: ubuntu-latest` |
| `steps:` | `steps:` |
| `- uses: actions/checkout@v5` | `- uses: actions/checkout@v6` |
| | `- uses: actions/setup-go@v6` |
| | `with:` |
| | `go-version-file: go.mod` |
| | `check-latest: true` |
| | `cache: true` |
| `- uses: reviewdog/action-staticcheck@v1` | `- uses: reviewdog/action-staticcheck@v1` |
| `with:` | `with:` |
| `github_token: ${{ secrets.github_token }}` | `github_token: ${{ secrets.github_token }}` |

**.github/workflows/version-check.yml** (4 changes)

`@@ -10,10 +10,10 @@ jobs:`

| Old | New |
|---|---|
| `version-check:` | `version-check:` |
| `runs-on: ubuntu-latest` | `runs-on: ubuntu-latest` |
| `steps:` | `steps:` |
| `- uses: actions/checkout@v5` | `- uses: actions/checkout@v6` |
| | |
| `- name: Set up Go` | `- name: Set up Go` |
| `uses: actions/setup-go@v5` | `uses: actions/setup-go@v6` |
| `with:` | `with:` |
| `go-version: '1.21'` | `go-version: '1.21'` |
| | |

**.gitignore** (1 change)

`@@ -17,6 +17,7 @@`

| Old | New |
|---|---|
| `**/logs` | `**/logs` |
| `**/adhoc` | `**/adhoc` |
| `**/coverage.txt` | `**/coverage.txt` |
| | `**/WARP.md` |
| | |
| `# for test purpose` | `# for test purpose` |
| `go.work` | `go.work` |

`@@ -40,7 +40,7 @@ type (`

| Old | New |
|---|---|
| `}` | `}` |
| `)` | `)` |
| | |
| `// New create a Filter, store is the backed redis, key is the key for the bloom filter,` | `// New creates a Filter, store is the backed redis, key is the key for the bloom filter,` |
| `// bits is how many bits will be used, maps is how many hashes for each addition.` | `// bits is how many bits will be used, maps is how many hashes for each addition.` |
| `// best practices:` | `// best practices:` |
| `// elements - means how many actual elements` | `// elements - means how many actual elements` |

`@@ -6,8 +6,6 @@ import (`

| Old | New |
|---|---|
| `"crypto/cipher"` | `"crypto/cipher"` |
| `"encoding/base64"` | `"encoding/base64"` |
| `"errors"` | `"errors"` |
| | |
| `"github.com/zeromicro/go-zero/core/logx"` | |
| `)` | `)` |
| | |
| `// ErrPaddingSize indicates bad padding size.` | `// ErrPaddingSize indicates bad padding size.` |

`@@ -27,7 +25,8 @@ func newECB(b cipher.Block) *ecb {`

| Old | New |
|---|---|
| | |
| `type ecbEncrypter ecb` | `type ecbEncrypter ecb` |
| | |
| `// NewECBEncrypter returns an ECB encrypter.` | `// Deprecated: NewECBEncrypter returns an ECB encrypter.` |
| | `// ECB mode is insecure for multi-block data. Use AES-GCM instead.` |
| `func NewECBEncrypter(b cipher.Block) cipher.BlockMode {` | `func NewECBEncrypter(b cipher.Block) cipher.BlockMode {` |
| `return (*ecbEncrypter)(newECB(b))` | `return (*ecbEncrypter)(newECB(b))` |
| `}` | `}` |

`@@ -39,12 +38,10 @@ func (x *ecbEncrypter) BlockSize() int { return x.blockSize }`

| Old | New |
|---|---|
| `// the block size. Dst and src must overlap entirely or not at all.` | `// the block size. Dst and src must overlap entirely or not at all.` |
| `func (x *ecbEncrypter) CryptBlocks(dst, src []byte) {` | `func (x *ecbEncrypter) CryptBlocks(dst, src []byte) {` |
| `if len(src)%x.blockSize != 0 {` | `if len(src)%x.blockSize != 0 {` |
| `logx.Error("crypto/cipher: input not full blocks")` | `panic("crypto/cipher: input not full blocks")` |
| `return` | |
| `}` | `}` |
| `if len(dst) < len(src) {` | `if len(dst) < len(src) {` |
| `logx.Error("crypto/cipher: output smaller than input")` | `panic("crypto/cipher: output smaller than input")` |
| `return` | |
| `}` | `}` |
| | |
| `for len(src) > 0 {` | `for len(src) > 0 {` |

`@@ -56,7 +53,8 @@ func (x *ecbEncrypter) CryptBlocks(dst, src []byte) {`

| Old | New |
|---|---|
| | |
| `type ecbDecrypter ecb` | `type ecbDecrypter ecb` |
| | |
| `// NewECBDecrypter returns an ECB decrypter.` | `// Deprecated: NewECBDecrypter returns an ECB decrypter.` |
| | `// ECB mode is insecure for multi-block data. Use AES-GCM instead.` |
| `func NewECBDecrypter(b cipher.Block) cipher.BlockMode {` | `func NewECBDecrypter(b cipher.Block) cipher.BlockMode {` |
| `return (*ecbDecrypter)(newECB(b))` | `return (*ecbDecrypter)(newECB(b))` |
| `}` | `}` |

@@ -70,12 +68,10 @@ func (x *ecbDecrypter) BlockSize() int {
 // the block size. Dst and src must overlap entirely or not at all.
 func (x *ecbDecrypter) CryptBlocks(dst, src []byte) {
 	if len(src)%x.blockSize != 0 {
-		logx.Error("crypto/cipher: input not full blocks")
-		return
+		panic("crypto/cipher: input not full blocks")
 	}
 	if len(dst) < len(src) {
-		logx.Error("crypto/cipher: output smaller than input")
-		return
+		panic("crypto/cipher: output smaller than input")
 	}
 
 	for len(src) > 0 {

@@ -85,14 +81,18 @@ func (x *ecbDecrypter) CryptBlocks(dst, src []byte) {
 	}
 }
 
-// EcbDecrypt decrypts src with the given key.
+// Deprecated: EcbDecrypt decrypts src with the given key.
+// ECB mode is insecure for multi-block data. Use AES-GCM instead.
 func EcbDecrypt(key, src []byte) ([]byte, error) {
 	block, err := aes.NewCipher(key)
 	if err != nil {
-		logx.Errorf("Decrypt key error: % x", key)
 		return nil, err
 	}
 
+	if len(src)%block.BlockSize() != 0 {
+		return nil, ErrPaddingSize
+	}
+
 	decrypter := NewECBDecrypter(block)
 	decrypted := make([]byte, len(src))
 	decrypter.CryptBlocks(decrypted, src)

@@ -100,8 +100,9 @@ func EcbDecrypt(key, src []byte) ([]byte, error) {
 	return pkcs5Unpadding(decrypted, decrypter.BlockSize())
 }
 
-// EcbDecryptBase64 decrypts base64 encoded src with the given base64 encoded key.
+// Deprecated: EcbDecryptBase64 decrypts base64 encoded src with the given base64 encoded key.
 // The returned string is also base64 encoded.
+// ECB mode is insecure for multi-block data. Use AES-GCM instead.
 func EcbDecryptBase64(key, src string) (string, error) {
 	keyBytes, err := getKeyBytes(key)
 	if err != nil {

@@ -121,11 +122,11 @@ func EcbDecryptBase64(key, src string) (string, error) {
 	return base64.StdEncoding.EncodeToString(decryptedBytes), nil
 }
 
-// EcbEncrypt encrypts src with the given key.
+// Deprecated: EcbEncrypt encrypts src with the given key.
+// ECB mode is insecure for multi-block data. Use AES-GCM instead.
 func EcbEncrypt(key, src []byte) ([]byte, error) {
 	block, err := aes.NewCipher(key)
 	if err != nil {
-		logx.Errorf("Encrypt key error: % x", key)
 		return nil, err
 	}
 

@@ -137,8 +138,9 @@ func EcbEncrypt(key, src []byte) ([]byte, error) {
 	return crypted, nil
 }
 
-// EcbEncryptBase64 encrypts base64 encoded src with the given base64 encoded key.
+// Deprecated: EcbEncryptBase64 encrypts base64 encoded src with the given base64 encoded key.
 // The returned string is also base64 encoded.
+// ECB mode is insecure for multi-block data. Use AES-GCM instead.
 func EcbEncryptBase64(key, src string) (string, error) {
 	keyBytes, err := getKeyBytes(key)
 	if err != nil {

@@ -179,10 +181,20 @@ func pkcs5Padding(ciphertext []byte, blockSize int) []byte {
 
 func pkcs5Unpadding(src []byte, blockSize int) ([]byte, error) {
 	length := len(src)
-	unpadding := int(src[length-1])
-	if unpadding >= length || unpadding > blockSize {
+	if length == 0 {
 		return nil, ErrPaddingSize
 	}
 
+	unpadding := int(src[length-1])
+	if unpadding < 1 || unpadding > blockSize || unpadding > length {
+		return nil, ErrPaddingSize
+	}
+
+	for _, b := range src[length-unpadding:] {
+		if int(b) != unpadding {
+			return nil, ErrPaddingSize
+		}
+	}
+
 	return src[:length-unpadding], nil
 }


@@ -28,8 +28,8 @@ func TestAesEcb(t *testing.T) {
 	_, err = EcbDecrypt(badKey2, dst)
 	assert.NotNil(t, err)
 	_, err = EcbDecrypt(key, val)
-	// not enough block, just nil
-	assert.Nil(t, err)
+	// not a multiple of block size
+	assert.NotNil(t, err)
 	src, err := EcbDecrypt(key, dst)
 	assert.Nil(t, err)
 	assert.Equal(t, val, src)

@@ -41,33 +41,28 @@ func TestAesEcb(t *testing.T) {
 	assert.Equal(t, 16, decrypter.BlockSize())
 
 	dst = make([]byte, 8)
-	encrypter.CryptBlocks(dst, val)
-	for _, b := range dst {
-		assert.Equal(t, byte(0), b)
-	}
+	assert.Panics(t, func() {
+		encrypter.CryptBlocks(dst, val)
+	})
 
 	dst = make([]byte, 8)
-	encrypter.CryptBlocks(dst, valLong)
-	for _, b := range dst {
-		assert.Equal(t, byte(0), b)
-	}
+	assert.Panics(t, func() {
+		encrypter.CryptBlocks(dst, valLong)
+	})
 
 	dst = make([]byte, 8)
-	decrypter.CryptBlocks(dst, val)
-	for _, b := range dst {
-		assert.Equal(t, byte(0), b)
-	}
+	assert.Panics(t, func() {
+		decrypter.CryptBlocks(dst, val)
+	})
 
 	dst = make([]byte, 8)
-	decrypter.CryptBlocks(dst, valLong)
-	for _, b := range dst {
-		assert.Equal(t, byte(0), b)
-	}
+	assert.Panics(t, func() {
+		decrypter.CryptBlocks(dst, valLong)
+	})
 
 	_, err = EcbEncryptBase64("cTR0N3dDKkYtSmFOZFJnVWpYbjJyNXU4eC9BP0QK", "aGVsbG93b3JsZGxvbmcuLgo=")
 	assert.Error(t, err)
 }
 
 func TestAesEcbBase64(t *testing.T) {
 	const (
 		val = "hello"

@@ -98,3 +93,44 @@ func TestAesEcbBase64(t *testing.T) {
 	assert.Nil(t, err)
 	assert.Equal(t, val, string(b))
 }
+
+func TestPkcs5UnpaddingEmptyInput(t *testing.T) {
+	_, err := pkcs5Unpadding([]byte{}, 16)
+	assert.Equal(t, ErrPaddingSize, err)
+}
+
+func TestPkcs5UnpaddingMalformedPadding(t *testing.T) {
+	// Valid PKCS5 padding of 3: last 3 bytes should all be 0x03
+	// Here we corrupt one padding byte
+	malformed := []byte{0x41, 0x41, 0x41, 0x41, 0x41, 0x41, 0x41, 0x41,
+		0x41, 0x41, 0x41, 0x41, 0x41, 0x02, 0x03, 0x03}
+	_, err := pkcs5Unpadding(malformed, 16)
+	assert.Equal(t, ErrPaddingSize, err)
+
+	// All padding bytes correct
+	valid := []byte{0x41, 0x41, 0x41, 0x41, 0x41, 0x41, 0x41, 0x41,
+		0x41, 0x41, 0x41, 0x41, 0x41, 0x03, 0x03, 0x03}
+	result, err := pkcs5Unpadding(valid, 16)
+	assert.NoError(t, err)
+	assert.Equal(t, valid[:13], result)
+}
+
+func TestPkcs5UnpaddingInvalidPaddingValue(t *testing.T) {
+	// padding value = 0 (< 1)
+	_, err := pkcs5Unpadding([]byte{0x41, 0x00}, 16)
+	assert.Equal(t, ErrPaddingSize, err)
+
+	// padding value > blockSize
+	_, err = pkcs5Unpadding([]byte{0x41, 0x41, 0x41, 0x41, 17}, 4)
+	assert.Equal(t, ErrPaddingSize, err)
+
+	// padding value > length
+	_, err = pkcs5Unpadding([]byte{0x41, 0x03}, 16)
+	assert.Equal(t, ErrPaddingSize, err)
+}
+
+func TestEcbDecryptEmptyInput(t *testing.T) {
+	key := []byte("q4t7w!z%C*F-JaNdRgUjXn2r5u8x/A?D")
+	_, err := EcbDecrypt(key, []byte{})
+	assert.Equal(t, ErrPaddingSize, err)
+}


@@ -35,7 +35,7 @@ func ComputeKey(pubKey, priKey *big.Int) (*big.Int, error) {
 		return nil, ErrInvalidPubKey
 	}
 
-	if pubKey.Sign() <= 0 && p.Cmp(pubKey) <= 0 {
+	if pubKey.Sign() <= 0 || p.Cmp(pubKey) <= 0 {
 		return nil, ErrPubKeyOutOfBound
 	}
 


@@ -94,3 +94,32 @@ func TestDHOnErrors(t *testing.T) {
 
 	assert.NotNil(t, NewPublicKey([]byte("")))
 }
+
+func TestDHPubKeyBoundary(t *testing.T) {
+	key, err := GenerateKey()
+	assert.Nil(t, err)
+
+	// pubKey = 0 should be rejected
+	_, err = ComputeKey(big.NewInt(0), key.PriKey)
+	assert.ErrorIs(t, err, ErrPubKeyOutOfBound)
+
+	// pubKey = -1 should be rejected
+	_, err = ComputeKey(big.NewInt(-1), key.PriKey)
+	assert.ErrorIs(t, err, ErrPubKeyOutOfBound)
+
+	// pubKey = p should be rejected
+	_, err = ComputeKey(new(big.Int).Set(p), key.PriKey)
+	assert.ErrorIs(t, err, ErrPubKeyOutOfBound)
+
+	// pubKey = p+1 should be rejected
+	_, err = ComputeKey(new(big.Int).Add(p, big.NewInt(1)), key.PriKey)
+	assert.ErrorIs(t, err, ErrPubKeyOutOfBound)
+
+	// pubKey = 1 should be accepted
+	_, err = ComputeKey(big.NewInt(1), key.PriKey)
+	assert.NoError(t, err)
+
+	// pubKey = p-1 should be accepted
+	_, err = ComputeKey(new(big.Int).Sub(p, big.NewInt(1)), key.PriKey)
+	assert.NoError(t, err)
+}


@@ -3,6 +3,7 @@ package codec
 import (
 	"crypto/rand"
 	"crypto/rsa"
+	"crypto/sha256"
 	"crypto/x509"
 	"encoding/base64"
 	"encoding/pem"

@@ -46,7 +47,9 @@ type (
 	}
 )
 
-// NewRsaDecrypter returns a RsaDecrypter with the given file.
+// Deprecated: NewRsaDecrypter returns a RsaDecrypter with the given file.
+// PKCS#1 v1.5 padding is vulnerable to padding oracle attacks.
+// Use NewRsaOAEPDecrypter instead.
 func NewRsaDecrypter(file string) (RsaDecrypter, error) {
 	content, err := os.ReadFile(file)
 	if err != nil {

@@ -90,7 +93,9 @@ func (r *rsaDecrypter) DecryptBase64(input string) ([]byte, error) {
 	return r.Decrypt(base64Decoded)
 }
 
-// NewRsaEncrypter returns a RsaEncrypter with the given key.
+// Deprecated: NewRsaEncrypter returns a RsaEncrypter with the given key.
+// PKCS#1 v1.5 padding is vulnerable to padding oracle attacks.
+// Use NewRsaOAEPEncrypter instead.
 func NewRsaEncrypter(key []byte) (RsaEncrypter, error) {
 	block, _ := pem.Decode(key)
 	if block == nil {

@@ -154,3 +159,90 @@ func rsaDecryptBlock(privateKey *rsa.PrivateKey, block []byte) ([]byte, error) {
 func rsaEncryptBlock(publicKey *rsa.PublicKey, msg []byte) ([]byte, error) {
 	return rsa.EncryptPKCS1v15(rand.Reader, publicKey, msg)
 }
+
+// NewRsaOAEPDecrypter returns a RsaDecrypter using OAEP with SHA-256.
+func NewRsaOAEPDecrypter(file string) (RsaDecrypter, error) {
+	content, err := os.ReadFile(file)
+	if err != nil {
+		return nil, err
+	}
+
+	block, _ := pem.Decode(content)
+	if block == nil {
+		return nil, ErrPrivateKey
+	}
+
+	privateKey, err := x509.ParsePKCS1PrivateKey(block.Bytes)
+	if err != nil {
+		return nil, err
+	}
+
+	return &rsaOAEPDecrypter{
+		rsaBase: rsaBase{
+			bytesLimit: privateKey.N.BitLen() >> 3,
+		},
+		privateKey: privateKey,
+	}, nil
+}
+
+// NewRsaOAEPEncrypter returns a RsaEncrypter using OAEP with SHA-256.
+func NewRsaOAEPEncrypter(key []byte) (RsaEncrypter, error) {
+	block, _ := pem.Decode(key)
+	if block == nil {
+		return nil, ErrPublicKey
+	}
+
+	pub, err := x509.ParsePKIXPublicKey(block.Bytes)
+	if err != nil {
+		return nil, err
+	}
+
+	switch pubKey := pub.(type) {
+	case *rsa.PublicKey:
+		// OAEP overhead: 2*hash_size + 2
+		hashSize := sha256.New().Size()
+		return &rsaOAEPEncrypter{
+			rsaBase: rsaBase{
+				bytesLimit: (pubKey.N.BitLen() >> 3) - 2*hashSize - 2,
+			},
+			publicKey: pubKey,
+		}, nil
+	default:
+		return nil, ErrNotRsaKey
+	}
+}
+
+type rsaOAEPDecrypter struct {
+	rsaBase
+	privateKey *rsa.PrivateKey
+}
+
+func (r *rsaOAEPDecrypter) Decrypt(input []byte) ([]byte, error) {
+	return r.crypt(input, func(block []byte) ([]byte, error) {
+		return rsa.DecryptOAEP(sha256.New(), rand.Reader, r.privateKey, block, nil)
+	})
+}
+
+func (r *rsaOAEPDecrypter) DecryptBase64(input string) ([]byte, error) {
+	if len(input) == 0 {
+		return nil, nil
+	}
+
+	base64Decoded, err := base64.StdEncoding.DecodeString(input)
+	if err != nil {
+		return nil, err
+	}
+
+	return r.Decrypt(base64Decoded)
+}
+
+type rsaOAEPEncrypter struct {
+	rsaBase
+	publicKey *rsa.PublicKey
+}
+
+func (r *rsaOAEPEncrypter) Encrypt(input []byte) ([]byte, error) {
+	return r.crypt(input, func(block []byte) ([]byte, error) {
+		return rsa.EncryptOAEP(sha256.New(), rand.Reader, r.publicKey, block, nil)
+	})
+}


@@ -1,7 +1,12 @@
 package codec
 
 import (
+	"crypto/ecdsa"
+	"crypto/elliptic"
+	"crypto/rand"
+	"crypto/x509"
 	"encoding/base64"
+	"encoding/pem"
 	"os"
 	"testing"
 

@@ -58,3 +63,78 @@ func TestBadPubKey(t *testing.T) {
 	_, err := NewRsaEncrypter([]byte("foo"))
 	assert.Equal(t, ErrPublicKey, err)
 }
+
+func TestOAEPCryption(t *testing.T) {
+	enc, err := NewRsaOAEPEncrypter([]byte(pubKey))
+	assert.Nil(t, err)
+	ret, err := enc.Encrypt([]byte(testBody))
+	assert.Nil(t, err)
+
+	file, err := fs.TempFilenameWithText(priKey)
+	assert.Nil(t, err)
+	defer os.Remove(file)
+	dec, err := NewRsaOAEPDecrypter(file)
+	assert.Nil(t, err)
+	actual, err := dec.Decrypt(ret)
+	assert.Nil(t, err)
+	assert.Equal(t, testBody, string(actual))
+
+	actual, err = dec.DecryptBase64(base64.StdEncoding.EncodeToString(ret))
+	assert.Nil(t, err)
+	assert.Equal(t, testBody, string(actual))
+
+	// empty input
+	actual, err = dec.DecryptBase64("")
+	assert.Nil(t, err)
+	assert.Nil(t, actual)
+}
+
+func TestOAEPBadKeys(t *testing.T) {
+	_, err := NewRsaOAEPEncrypter([]byte("bad"))
+	assert.Equal(t, ErrPublicKey, err)
+
+	_, err = NewRsaOAEPDecrypter("nonexistent")
+	assert.Error(t, err)
+
+	// valid PEM but invalid private key content
+	badPem, err := fs.TempFilenameWithText("-----BEGIN RSA PRIVATE KEY-----\nYmFk\n-----END RSA PRIVATE KEY-----")
+	assert.Nil(t, err)
+	defer os.Remove(badPem)
+	_, err = NewRsaOAEPDecrypter(badPem)
+	assert.Error(t, err)
+
+	// not PEM content at all
+	notPem, err := fs.TempFilenameWithText("not a pem file")
+	assert.Nil(t, err)
+	defer os.Remove(notPem)
+	_, err = NewRsaOAEPDecrypter(notPem)
+	assert.Equal(t, ErrPrivateKey, err)
+}
+
+func TestOAEPEncrypterParseError(t *testing.T) {
+	// valid PEM block but invalid public key content
+	badPub := []byte("-----BEGIN PUBLIC KEY-----\nYmFk\n-----END PUBLIC KEY-----")
+	_, err := NewRsaOAEPEncrypter(badPub)
+	assert.Error(t, err)
+}
+
+func TestOAEPEncrypterNonRsaKey(t *testing.T) {
+	ecKey, err := ecdsa.GenerateKey(elliptic.P256(), rand.Reader)
+	assert.Nil(t, err)
+	derBytes, err := x509.MarshalPKIXPublicKey(&ecKey.PublicKey)
+	assert.Nil(t, err)
+	ecPem := pem.EncodeToMemory(&pem.Block{Type: "PUBLIC KEY", Bytes: derBytes})
+	_, err = NewRsaOAEPEncrypter(ecPem)
+	assert.Equal(t, ErrNotRsaKey, err)
+}
+
+func TestOAEPDecryptBase64Error(t *testing.T) {
+	file, err := fs.TempFilenameWithText(priKey)
+	assert.Nil(t, err)
+	defer os.Remove(file)
+	dec, err := NewRsaOAEPDecrypter(file)
+	assert.Nil(t, err)
+
+	_, err = dec.DecryptBase64("not-valid-base64!!!")
+	assert.Error(t, err)
+}


@@ -81,6 +81,10 @@ func (c *Cache) Del(key string) {
 	delete(c.data, key)
 	c.lruCache.remove(key)
 	c.lock.Unlock()
+
+	// RemoveTimer is called outside the lock to avoid performance impact from this
+	// potentially time-consuming operation. Data integrity is maintained by lruCache,
+	// which will eventually evict any remaining entries when capacity is exceeded.
 	c.timingWheel.RemoveTimer(key)
 }
 
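The reordered `Del` keeps the map and LRU updates inside the critical section and calls `RemoveTimer` only after `Unlock`. A minimal sketch of that lock-scoping pattern, with hypothetical types (`miniCache`, `slowRemover`) standing in for go-zero's `Cache` and `TimingWheel`: the mutex guards only the in-memory state, and the slower cleanup runs without holding it.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// slowRemover is a hypothetical stand-in for a timing wheel whose
// RemoveTimer may take a while to complete.
type slowRemover struct {
	mu      sync.Mutex
	removed []string
}

func (r *slowRemover) RemoveTimer(key string) {
	time.Sleep(time.Millisecond) // simulate a slow operation
	r.mu.Lock()
	r.removed = append(r.removed, key)
	r.mu.Unlock()
}

// miniCache is a hypothetical cache with a single lock over its data.
type miniCache struct {
	lock  sync.Mutex
	data  map[string]any
	timer *slowRemover
}

// Del mutates shared state under the lock, then releases it before the
// slower timer cleanup so other callers are not blocked by that cleanup.
func (c *miniCache) Del(key string) {
	c.lock.Lock()
	delete(c.data, key)
	c.lock.Unlock()

	c.timer.RemoveTimer(key)
}

func main() {
	c := &miniCache{data: map[string]any{"a": 1, "b": 2}, timer: &slowRemover{}}
	c.Del("a")

	c.lock.Lock()
	_, ok := c.data["a"]
	c.lock.Unlock()
	fmt.Println(ok, c.timer.removed) // false [a]
}
```

The trade-off, as the diff's comment notes, is a short window in which the entry is gone from the map but its timer still exists; correctness then relies on the timer callback tolerating a missing key.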


@@ -164,6 +164,7 @@ func (tw *TimingWheel) Stop() {
 
 func (tw *TimingWheel) drainAll(fn func(key, value any)) {
 	runner := threading.NewTaskRunner(drainWorkers)
+
 	for _, slot := range tw.slots {
 		for e := slot.Front(); e != nil; {
 			task := e.Value.(*timingEntry)

@@ -177,6 +178,8 @@ func (tw *TimingWheel) drainAll(fn func(key, value any)) {
 			}